Swarm Intelligence Algorithms: Three Python Implementations

Imagine watching a flock of birds in flight. There's no leader, no one giving directions, yet they swoop and glide together in perfect harmony. It may look like chaos, but there's a hidden order. You can see the same pattern in schools of fish avoiding predators or ants finding the shortest path to food. These creatures rely on simple rules and local communication to tackle surprisingly complex tasks without central control.

That’s the magic of swarm intelligence.

We can replicate this behavior with algorithms that mimic swarm intelligence to solve tough problems.

Particle Swarm Optimization (PSO)

Particle swarm optimization (PSO) draws its inspiration from the behavior of flocks of birds and schools of fish. In these natural systems, individuals move based on their own previous experience and their neighbors' positions, gradually adjusting to follow the most successful members of the group. PSO applies this concept to optimization problems, where agents, called particles, move through the search space to find an optimal solution.

Unlike ant colony optimization (ACO), which works in discrete spaces, PSO operates in continuous ones. ACO focuses on pathfinding and discrete choices, while PSO is better suited to problems involving continuous variables, such as parameter tuning.

In PSO, particles explore a search space, adjusting their positions based on two main factors: their personal best-known position and the best-known position of the entire swarm. This dual feedback mechanism pulls the swarm toward the best region found so far and, ideally, toward the global optimum.
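The two pulls can be written down explicitly. In the common global-best form of PSO, which uses the same parameters named in the implementation below (inertia weight w, cognitive coefficient c1, social coefficient c2, and uniform random numbers r1, r2 in [0, 1) drawn per dimension), particle i with position x_i, velocity v_i, and personal best p_i is updated toward the swarm's best position g as follows:

$$
\begin{aligned}
v_i^{t+1} &= w\,v_i^{t} + c_1 r_1 \left(p_i - x_i^{t}\right) + c_2 r_2 \left(g - x_i^{t}\right) \\
x_i^{t+1} &= x_i^{t} + v_i^{t+1}
\end{aligned}
$$

For example, in one dimension with w = 0.5, c1 = c2 = 1.5, x = 2, v = 1, p = 1, g = 0, r1 = 0.5, and r2 = 0.4, the new velocity is 0.5 - 0.75 - 1.2 = -1.45, so the particle moves from x = 2 to x = 0.55, toward the swarm's best at 0.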

How particle swarm optimization works

The process starts with a swarm of particles initialized randomly across the solution space. Each particle represents a possible solution to the optimization problem. As the particles move, they remember their personal best positions (the best solution they’ve encountered so far) and are attracted toward the global best position (the best solution any particle has found).

This movement balances two behaviors: exploitation and exploration. Exploitation refines the search around the current best solutions, while exploration sends particles into other parts of the solution space to avoid getting stuck in local optima. By balancing these two dynamics, PSO can converge efficiently on a good solution, although it is not guaranteed to find the global optimum.
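To make the loop concrete, here is a minimal sketch of global-best PSO minimizing a simple test function (the sphere function, sum of squares, whose minimum is 0 at the origin). The function names, bounds, and parameter values (w = 0.7, c1 = c2 = 1.5) are illustrative assumptions, not taken from the article's code. Each iteration applies the velocity update, keeps particles inside the bounds, and refreshes the personal and global bests.

```python
import numpy as np


def sphere(x):
    """Convex test function with its global minimum 0 at the origin."""
    return float(np.sum(x ** 2))


def pso_minimize(f, dim=2, n_particles=20, n_iter=100, bounds=(-5.0, 5.0),
                 w=0.7, c1=1.5, c2=1.5, seed=0):
    """Global-best PSO: each particle is pulled toward its own best and the swarm's best."""
    rng = np.random.default_rng(seed)
    low, high = bounds
    # Random initial positions; small random initial velocities
    pos = rng.uniform(low, high, (n_particles, dim))
    vel = rng.uniform(-1.0, 1.0, (n_particles, dim)) * 0.1 * (high - low)
    # Personal bests start at the initial positions
    pbest_pos = pos.copy()
    pbest_val = np.array([f(p) for p in pos])
    # The global best is the best of the personal bests
    g = np.argmin(pbest_val)
    gbest_pos, gbest_val = pbest_pos[g].copy(), pbest_val[g]

    for _ in range(n_iter):
        r1 = rng.random((n_particles, dim))
        r2 = rng.random((n_particles, dim))
        # Inertia + cognitive pull (own best) + social pull (swarm best)
        vel = w * vel + c1 * r1 * (pbest_pos - pos) + c2 * r2 * (gbest_pos - pos)
        pos = np.clip(pos + vel, low, high)  # Keep particles inside the bounds
        vals = np.array([f(p) for p in pos])
        # Update personal bests wherever the new position is better
        improved = vals < pbest_val
        pbest_pos[improved] = pos[improved]
        pbest_val[improved] = vals[improved]
        # Update the global best from the personal bests
        g = np.argmin(pbest_val)
        if pbest_val[g] < gbest_val:
            gbest_pos, gbest_val = pbest_pos[g].copy(), pbest_val[g]
    return gbest_pos, gbest_val


best_pos, best_val = pso_minimize(sphere)
print(best_pos, best_val)
```

A larger w keeps particles moving longer (more exploration); smaller w and a larger c2 concentrate the swarm around the current best sooner (more exploitation).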

Particle swarm optimization Python implementation

In financial portfolio management, finding the allocation of assets that earns the most return while keeping risk low can be tricky. Let’s use PSO to find the mix of assets with the best return relative to its risk.

The code below shows how PSO works for optimizing a fictional financial portfolio. It starts with random asset allocations, then adjusts them over several iterations based on what has worked best so far, gradually moving toward the mix with the highest return per unit of risk.
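Concretely, the objective function in the code scores a weight vector w, given expected returns μ and covariance matrix Σ, by its return-to-risk ratio (the Sharpe ratio with the risk-free rate taken as zero). It returns the negative of that ratio, so minimizing the score maximizes risk-adjusted return; the weight constraints are enforced by clipping to [0, 1] and renormalizing:

$$
\min_{\mathbf{w}}\; f(\mathbf{w}) = -\frac{\mathbf{w}^{\top}\boldsymbol{\mu}}{\sqrt{\mathbf{w}^{\top}\Sigma\,\mathbf{w}}}
\quad \text{subject to} \quad \sum_{i} w_i = 1,\;\; 0 \le w_i \le 1
$$

For example, with two uncorrelated assets, μ = (0.10, 0.05), variances 0.04 and 0.01, and w = (0.5, 0.5), the return is 0.075, the variance is 0.25 · 0.04 + 0.25 · 0.01 = 0.0125, the risk is about 0.112, and f(w) ≈ -0.671.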

```python
import numpy as np
import matplotlib.pyplot as plt

# Each particle is one candidate portfolio (a vector of asset weights)
class Particle:
    def __init__(self, n_assets):
        # Initialize a particle with random weights and velocities
        self.position = np.random.rand(n_assets)
        self.position /= np.sum(self.position)  # Normalize weights so they sum to 1
        self.velocity = np.random.rand(n_assets)
        self.best_position = np.copy(self.position)
        self.best_score = float('inf')  # Start with the worst possible score (we minimize)

def objective_function(weights, returns, covariance):
    """
    Calculate the portfolio's performance (lower is better).
    - weights: Asset weights in the portfolio.
    - returns: Expected returns of the assets.
    - covariance: Covariance matrix representing risk.
    """
    portfolio_return = np.dot(weights, returns)  # Calculate the portfolio return
    portfolio_risk = np.sqrt(np.dot(weights.T, np.dot(covariance, weights)))  # Portfolio risk (standard deviation)
    # Negative return-to-risk ratio: minimizing it maximizes return per unit of risk
    return -portfolio_return / portfolio_risk

def update_particles(particles, global_best_position, returns, covariance, w, c1, c2):
    """
    Update the position and velocity of each particle.
    - particles: List of particle objects.
    - global_best_position: Best position found by all particles.
    - returns: Expected returns of the assets.
    - covariance: Covariance matrix representing risk.
    - w: Inertia weight controlling how much of the previous velocity is kept.
    - c1: Cognitive coefficient to pull particles towards their own best position.
    - c2: Social coefficient to pull particles towards the global best position.
    """
    for particle in particles:
        # Random coefficients for velocity update
        r1, r2 = np.random.rand(len(particle.position)), np.random.rand(len(particle.position))
        # Update velocity: inertia + pull toward personal best + pull toward global best
        particle.velocity = (w * particle.velocity
                             + c1 * r1 * (particle.best_position - particle.position)
                             + c2 * r2 * (global_best_position - particle.position))
        # Update position
        particle.position += particle.velocity
        particle.position = np.clip(particle.position, 0, 1)  # Ensure weights are between 0 and 1
        total = np.sum(particle.position)
        if total == 0:  # Every weight was clipped to 0: fall back to equal weights
            particle.position = np.full_like(particle.position, 1 / len(particle.position))
        else:
            particle.position /= total  # Normalize weights to sum to 1
        # Evaluate the new position
        score = objective_function(particle.position, returns, covariance)
        # Keep track of the particle's personal best (lower score is better)
        if score < particle.best_score:
            particle.best_score = score
            particle.best_position = np.copy(particle.position)
```

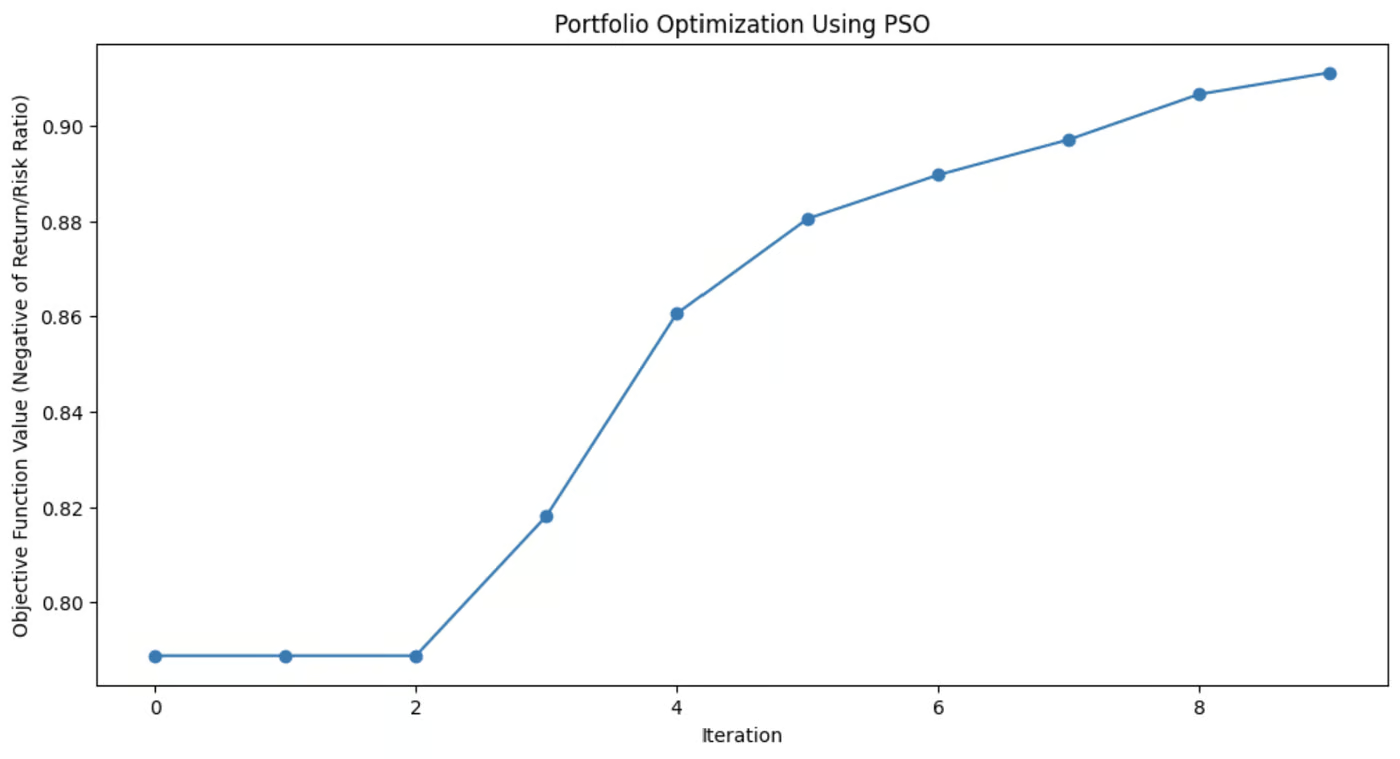

This graph demonstrates how much the PSO algorithm improved the portfolio’s asset mix with each iteration.
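The listing above covers particle initialization, the objective, and the per-particle update, but a full run also needs a loop that tracks the swarm's global best and records the best score per iteration, the kind of history plotted in the graph. Below is a self-contained, vectorized sketch of such a run. It assumes four hypothetical assets with made-up returns and covariance, and the names `neg_sharpe`, `normalize_weights`, and `pso_portfolio` are illustrative rather than taken from the original code, so its output is not expected to match the graph above.

```python
import numpy as np

# Hypothetical data for four assets (illustrative numbers, not market data)
RETURNS = np.array([0.08, 0.12, 0.10, 0.06])   # expected returns
VOLS = np.array([0.15, 0.25, 0.20, 0.10])      # volatilities
CORR = np.full((4, 4), 0.2) + 0.8 * np.eye(4)  # pairwise correlation 0.2
COVARIANCE = np.outer(VOLS, VOLS) * CORR


def neg_sharpe(weights, returns, covariance):
    """Negative return-to-risk ratio (risk-free rate 0); lower is better."""
    return -(weights @ returns) / np.sqrt(weights @ covariance @ weights)


def normalize_weights(x):
    """Clip weights to [0, 1] and rescale them to sum to 1 (equal weights if all are 0)."""
    x = np.clip(x, 0.0, 1.0)
    total = x.sum()
    return x / total if total > 0 else np.full_like(x, 1.0 / x.size)


def pso_portfolio(returns, covariance, n_particles=30, n_iter=100,
                  w=0.7, c1=1.5, c2=1.5, seed=42):
    rng = np.random.default_rng(seed)
    n = len(returns)
    pos = np.array([normalize_weights(rng.random(n)) for _ in range(n_particles)])
    vel = rng.uniform(-0.1, 0.1, (n_particles, n))
    pbest_pos = pos.copy()
    pbest_val = np.array([neg_sharpe(p, returns, covariance) for p in pos])
    g = np.argmin(pbest_val)
    gbest_pos, gbest_val = pbest_pos[g].copy(), pbest_val[g]
    history = []  # best ratio after each iteration (what a convergence plot shows)

    for _ in range(n_iter):
        r1, r2 = rng.random((2, n_particles, n))
        vel = w * vel + c1 * r1 * (pbest_pos - pos) + c2 * r2 * (gbest_pos - pos)
        # Move, then restore the constraints: weights in [0, 1] summing to 1
        pos = np.array([normalize_weights(p) for p in pos + vel])
        vals = np.array([neg_sharpe(p, returns, covariance) for p in pos])
        improved = vals < pbest_val
        pbest_pos[improved] = pos[improved]
        pbest_val[improved] = vals[improved]
        g = np.argmin(pbest_val)
        if pbest_val[g] < gbest_val:
            gbest_pos, gbest_val = pbest_pos[g].copy(), pbest_val[g]
        history.append(-gbest_val)
    return gbest_pos, -gbest_val, history


weights, ratio, history = pso_portfolio(RETURNS, COVARIANCE)
print("weights:", np.round(weights, 3), "return/risk:", round(float(ratio), 3))
```

Passing `history` to `plt.plot` would produce a convergence curve like the one above; it never decreases because the global best only changes when a better portfolio is found.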

Applications of particle swarm optimization

PSO is used for its simplicity and effectiveness in solving various optimization problems, particularly in continuous domains. Its flexibility makes it useful for many real-world scenarios where good solutions are needed and exact methods are impractical.

These applications include:

- Machine learning: PSO can be applied to tune hyperparameters in machine learning algorithms, helping to find the best model configurations.

- Engineering design: PSO is useful for optimizing design parameters for systems like aerospace components or electrical circuits.

- Financial modeling: In finance, PSO can help in portfolio optimization, minimizing risk while maximizing returns.

PSO's ability to efficiently explore solution spaces makes it applicable across fields, from robotics to energy management to logistics.

Artificial Bee Colony (ABC)

The artificial bee colony (ABC) algorithm is modeled on the foraging behavior of honeybees.

In nature, honeybees efficiently search for nectar sources and share this information with other members of the hive. ABC captures this collaborative search process and applies it to optimization problems, especially those involving complex, high-dimensional spaces.

What sets ABC apart from other swarm intelligence algorithms is how explicitly it balances exploitation (refining current solutions) and exploration (searching for new, potentially better ones) by assigning them to different groups of bees. This makes ABC particularly useful for large-scale problems where global optimization is key.

How artificial bee colony works

In the ABC algorithm, the swarm of bees is divided into three specialized roles: employed bees, onlookers, and scouts. Each of these roles mimics a different aspect of how bees search for and exploit food sources in nature.

- Employed bees: These bees are responsible for exploring known food sources, representing current solutions in the optimization problem. They assess the quality (fitness) of these sources and share the information with the rest of the hive.

- Onlooker bees: After gathering information from the employed bees, onlookers select which food sources to explore further. They base their choices on the quality of the solutions shared by the employed bees, focusing more on the better options, thus refining the search for an optimal solution.

- Scout bees: When an employed bee’s food source (solution) becomes exhausted or stagnant (when no improvement is found after a certain number of iterations), the bee becomes a scout. Scouts explore new areas of the solution space, searching for potentially unexplored food sources, thus injecting diversity into the search process.

This dynamic allows ABC to balance the search between intensively exploring promising areas and broadly exploring new areas of the search space. This helps the algorithm avoid getting trapped in local optima and increases its chances of finding a global optimum.
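The three roles map onto three phases of a loop. The sketch below follows the basic ABC scheme as it is commonly described: employed bees perturb one coordinate of their source relative to a random other source (x + φ(x - x_k), with φ uniform in [-1, 1]), onlookers pick sources with probability proportional to a fitness of 1 / (1 + cost), and a scout replaces a source that has failed to improve more than `limit` times. The function names and parameter values are illustrative assumptions, and the test function is the Rastrigin benchmark used in the implementation below.

```python
import numpy as np


def rastrigin(x):
    """Rastrigin function (A = 10); global minimum 0 at the origin."""
    return 10 * len(x) + np.sum(x ** 2 - 10 * np.cos(2 * np.pi * x))


def abc_minimize(f, dim=2, n_sources=20, n_iter=200, bounds=(-5.12, 5.12),
                 limit=20, seed=0):
    """Basic ABC sketch with employed, onlooker and scout phases."""
    rng = np.random.default_rng(seed)
    low, high = bounds
    foods = rng.uniform(low, high, (n_sources, dim))  # one food source per employed bee
    costs = np.array([f(x) for x in foods])
    trials = np.zeros(n_sources, dtype=int)  # cycles without improvement, per source

    def search_near(i):
        # Perturb one coordinate of source i relative to a random other source k
        k = rng.choice([j for j in range(n_sources) if j != i])
        d = rng.integers(dim)
        candidate = foods[i].copy()
        candidate[d] += rng.uniform(-1, 1) * (foods[i, d] - foods[k, d])
        candidate = np.clip(candidate, low, high)
        cost = f(candidate)
        if cost < costs[i]:  # Greedy selection: keep only improvements
            foods[i], costs[i], trials[i] = candidate, cost, 0
        else:
            trials[i] += 1

    best_x, best_cost = None, np.inf
    for _ in range(n_iter):
        # Employed bees: each one searches near its own food source
        for i in range(n_sources):
            search_near(i)
        # Onlooker bees: choose sources with probability proportional to fitness
        fitness = np.where(costs >= 0, 1 / (1 + np.maximum(costs, 0)), 1 + np.abs(costs))
        for i in rng.choice(n_sources, size=n_sources, p=fitness / fitness.sum()):
            search_near(i)
        # Memorize the best source before any source is abandoned
        i_best = np.argmin(costs)
        if costs[i_best] < best_cost:
            best_x, best_cost = foods[i_best].copy(), costs[i_best]
        # Scout bee: replace the most stagnant source if it exceeded the limit
        i_worst = np.argmax(trials)
        if trials[i_worst] > limit:
            foods[i_worst] = rng.uniform(low, high, dim)
            costs[i_worst] = f(foods[i_worst])
            trials[i_worst] = 0
    return best_x, best_cost


best_x, best_cost = abc_minimize(rastrigin)
print(best_x, best_cost)
```

The `limit` parameter sets the exploration/exploitation trade-off: a small limit sends scouts out often, while a large one lets bees keep refining the same sources.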

Artificial bee colony Python implementation

The Rastrigin function is a popular benchmark in optimization. Its numerous local minima make it a tough challenge for many algorithms. The goal is simple: find the global minimum.
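For reference, the function implemented as `rastrigin` below is, for an n-dimensional point x with A = 10 (the code searches each coordinate in [-5.12, 5.12]):

$$
f(\mathbf{x}) = A\,n + \sum_{i=1}^{n}\left(x_i^{2} - A\cos(2\pi x_i)\right)
$$

At the origin every cosine equals 1, so each term is -A and the sum cancels the A·n offset, giving the global minimum f(0) = 0. Away from the origin, the cosine term creates a regular grid of local minima near integer coordinates, which is what makes the function hard for purely local search.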

In this example, we’ll use the artificial bee colony algorithm to tackle this problem. Each bee in the ABC algorithm explores the search space, looking for better solutions to minimize the function. The code simulates bees that explore, exploit, and scout for new areas, ensuring a balance between exploration and exploitation.

```python
import numpy as np
import matplotlib.pyplot as plt

# Rastrigin function: The objective is to minimize this function
def rastrigin(X):
    A = 10
    return A * len(X) + sum([(x ** 2 - A * np.cos(2 * np.pi * x)) for x in X])

# Artificial Bee Colony (ABC) algorithm for continuous optimization of Rastrigin function
def artificial_bee_colony_rastrigin(n_iter=100, n_bees=30, dim=2, bound=(-5.12, 5.12)):
    """
    Apply Artificial Bee Colony (ABC) algorithm to minimize the Rastrigin function.

    Parameters:
    n_iter (int): Number of iterations
    n_bees (int): Number of bees in the population
    dim (int): Number of dimensions (variables)
    bound (tuple): Bounds for the search space (min, max)

    Returns:
    tuple: Best solution found, best fitness value, and list of best fitness values per iteration
    """
    # Initialize the bee population with random solutions within the given bounds
    bees = np.random.uniform(bound[0], bound[1], (n_bees, dim))
    best_bee = bees[0].copy()  # Copy so later in-place updates to bees don't change it
    best_fitness = rastrigin(best_bee)
    best_fitnesses = []

    for iteration in range(n_iter):
        # Employed bees phase: Explore new solutions based on the current bees
        for i in range(n_bees):
            # Generate a new candidate solution by perturbing the current bee's position
            new_bee = bees[i] + np.random.uniform(-1, 1, dim)
            new_bee = np.clip(new_bee, bound[0], bound[1])  # Keep within bounds
            # Evaluate the fitness of the new solution
            new_fitness = rastrigin(new_bee)
            # Greedy selection: keep the candidate only if it improves this bee
            if new_fitness < rastrigin(bees[i]):
                bees[i] = new_bee
                # Track the best solution found so far
                if new_fitness < best_fitness:
                    best_bee, best_fitness = new_bee.copy(), new_fitness
        best_fitnesses.append(best_fitness)

    return best_bee, best_fitness, best_fitnesses
```

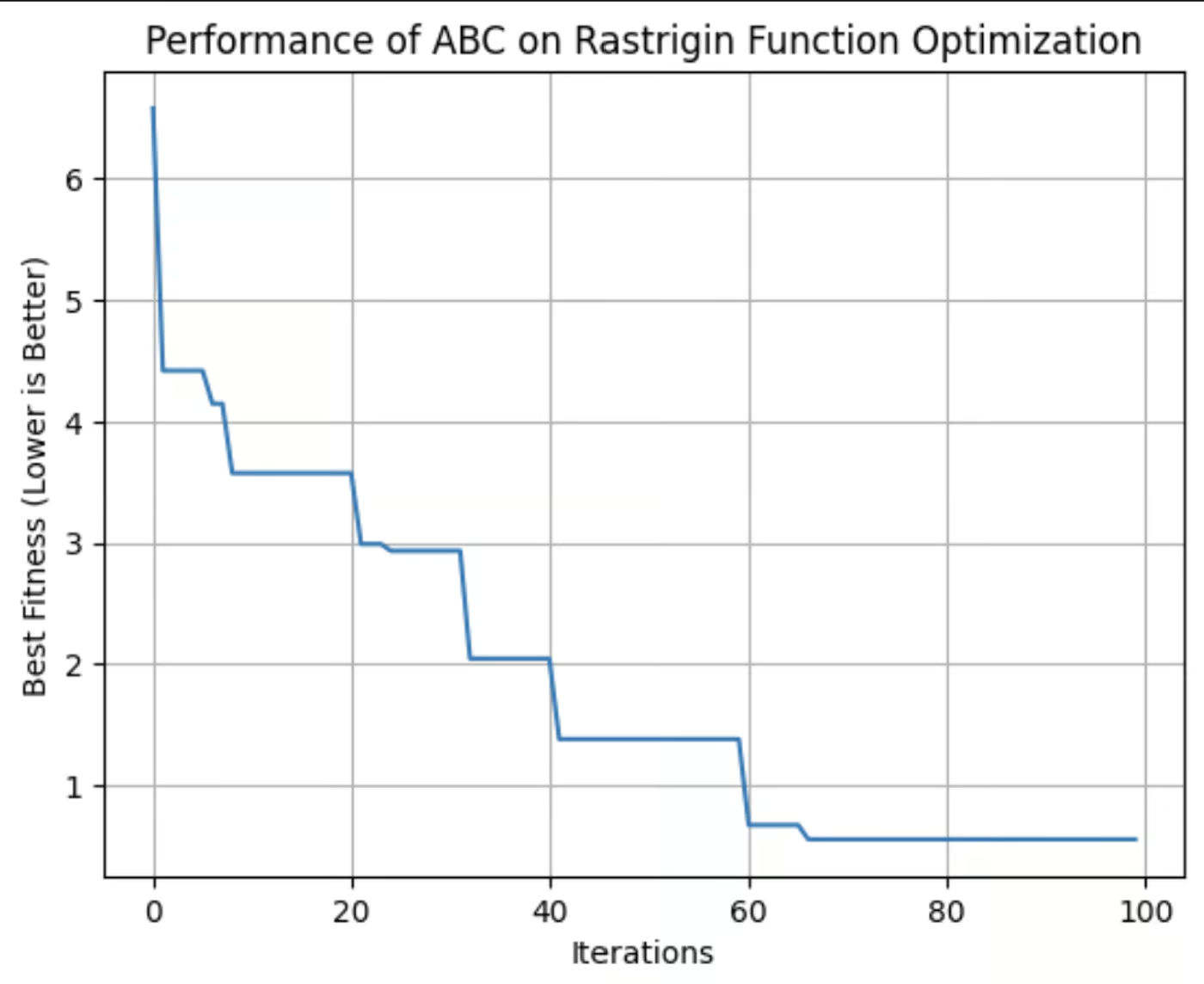

This graph shows the fitness of the best solution found by the ABC algorithm with each iteration. In this run, it reached its optimum fitness around the 64th iteration.

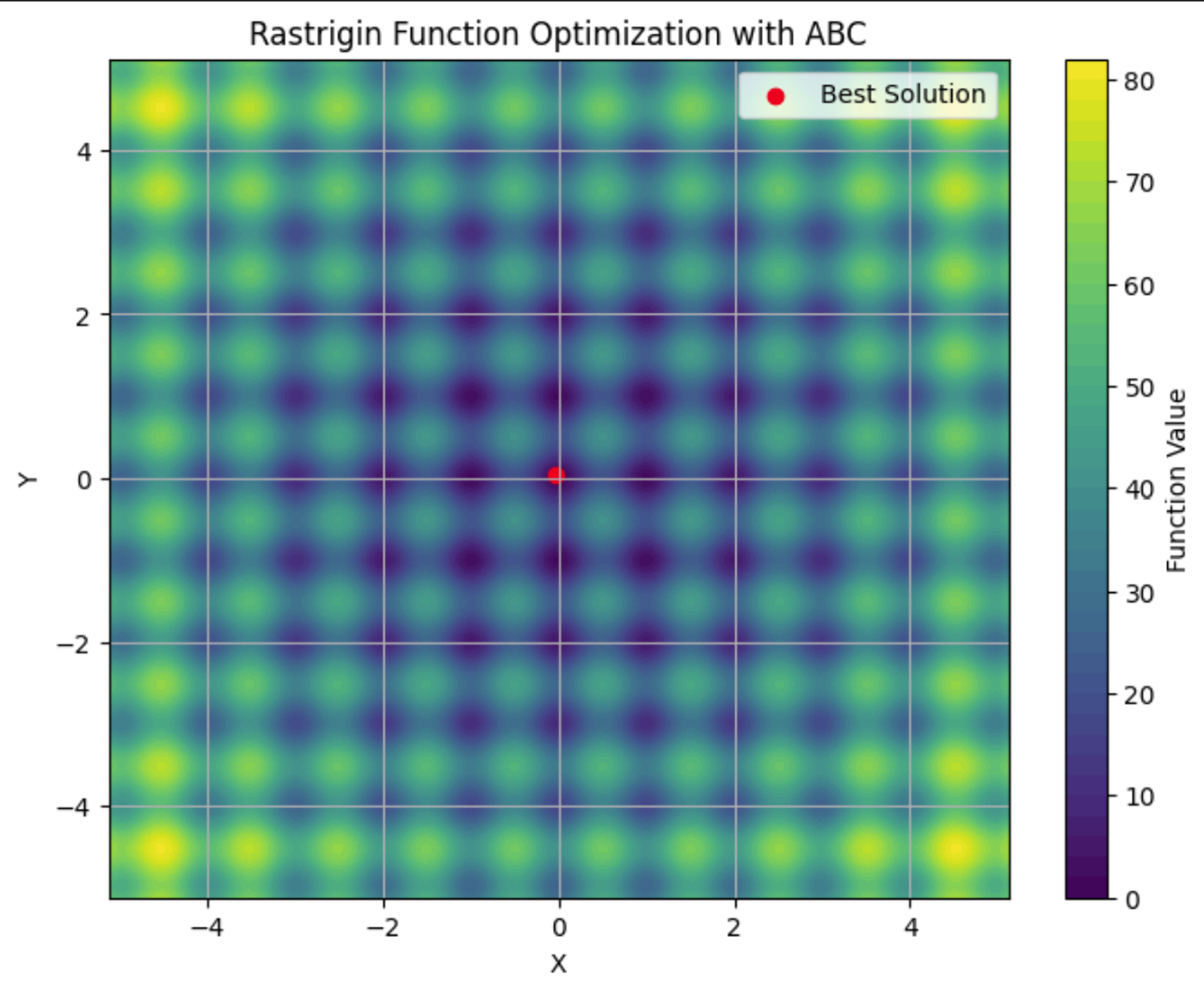

Here you can see the Rastrigin function plotted as a contour plot, with its many local minima. The red dot marks the global minimum found by the ABC algorithm in our run.

Applications of artificial bee colony

The ABC algorithm is a robust tool for solving optimization problems. Its ability to efficiently explore large and complex search spaces makes it a go-to choice for industries where adaptability and scalability are critical.

These applications include:

- Telecommunications: ABC can be used to optimize the placement of network resources and antennas, maximizing coverage and signal strength while minimizing costs.

- Engineering: ABC can fine-tune parameters in structural design optimization.

- Data science: ABC can be applied to feature selection to identify the most important variables in a dataset for machine learning.

ABC is a flexible algorithm suited to many problems where optimal solutions must be found in dynamic, high-dimensional environments. Its decentralized nature makes it well suited to situations where other algorithms may struggle to balance exploration and exploitation efficiently.

Comparing Swarm Intelligence Algorithms

There are multiple swarm intelligence algorithms, each with different attributes. When choosing one, weigh their strengths and weaknesses against your needs.

ACO is effective for combinatorial problems like routing and scheduling but may need significant computational resources. PSO is simpler and excels in continuous optimization, such as hyperparameter tuning, but can struggle with local optima. ABC successfully balances exploration and exploitation, though it requires careful tuning.

Other swarm intelligence algorithms, such as the firefly algorithm and cuckoo search, also offer unique advantages for specific types of optimization problems.

| Algorithm | Strengths | Weaknesses | Preferred Libraries | Best Applications |
|---|---|---|---|---|
| Ant Colony Optimization (ACO) | Effective for combinatorial problems; handles complex discrete spaces well | Computationally intensive and requires fine-tuning | pyaco | Routing problems, scheduling, and resource allocation |
| Particle Swarm Optimization (PSO) | Good for continuous optimization; simple and easy to implement | Can converge to local optima and is less effective for discrete problems | pyswarms | Hyperparameter tuning, engineering design, financial modeling |
| Artificial Bee Colony (ABC) | Adapts to large, dynamic problems; balances exploration and exploitation | Computationally intensive and requires careful parameter tuning | beecolpy | Telecommunications, large-scale optimization, and high-dimensional spaces |
| Firefly Algorithm (FA) | Excels in multimodal optimization and has strong global search ability | Sensitive to parameter settings and slower convergence | fireflyalgorithm | Image processing, engineering design, and multimodal optimization |
| Cuckoo Search (CS) | Efficient for solving optimization problems and has strong exploration capabilities | May converge prematurely and performance depends on tuning | cso | Scheduling, feature selection, and engineering applications |

Challenges and Limitations

Swarm intelligence algorithms, like many machine learning techniques, encounter challenges that can affect their performance. These include:

- Premature convergence: The swarm may settle on a suboptimal solution too quickly.

- Parameter tuning: Achieving optimal results often requires careful adjustment of algorithm settings.

- Computational resources and scalability: These algorithms can be computationally intensive, and their cost grows and their performance can degrade as problems become larger and more complex.

- Stochastic nature: The inherent randomness in these algorithms can lead to variability in results.
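One practical response to this randomness is to report results over several seeds rather than a single run, and to fix the seed whenever a run must be reproducible. The sketch below does both with a deliberately simplified, hypothetical swarm search (`simple_swarm_search`: each agent keeps a random step only if it improves, similar in spirit to the employed-bee phase in the ABC code) on the Rastrigin function; the number of seeds and all parameters are arbitrary choices.

```python
import numpy as np


def rastrigin(x):
    """Rastrigin function (A = 10); global minimum 0 at the origin."""
    return 10 * len(x) + np.sum(x ** 2 - 10 * np.cos(2 * np.pi * x))


def simple_swarm_search(seed, n_agents=20, n_iter=100, dim=2, bound=5.12):
    """Toy stochastic search: each agent keeps a random step only if it improves."""
    rng = np.random.default_rng(seed)  # All randomness comes from this seeded generator
    agents = rng.uniform(-bound, bound, (n_agents, dim))
    scores = np.array([rastrigin(a) for a in agents])
    for _ in range(n_iter):
        candidates = np.clip(agents + rng.uniform(-1, 1, agents.shape), -bound, bound)
        cand_scores = np.array([rastrigin(c) for c in candidates])
        better = cand_scores < scores
        agents[better] = candidates[better]
        scores[better] = cand_scores[better]
    return scores.min()


# Run the same experiment with ten different seeds and summarize the spread
results = np.array([simple_swarm_search(seed) for seed in range(10)])
print(f"best={results.min():.3f} mean={results.mean():.3f} std={results.std():.3f}")

# Reusing a seed reproduces a run exactly
print(simple_swarm_search(seed=123) == simple_swarm_search(seed=123))
```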

Latest Research and Advancements

A notable trend is the integration of swarm intelligence with other machine learning techniques. Researchers are exploring how swarm algorithms can enhance tasks such as feature selection and hyperparameter optimization. For an example, check out “A hybrid particle swarm optimization algorithm for solving engineering problem.”

Recent advancements also address some of the traditional challenges of swarm intelligence, such as premature convergence. New algorithms and techniques are being developed to reduce the risk of converging on suboptimal solutions. For more information, check out “Memory-based approaches for eliminating premature convergence in particle swarm optimization.”

Scalability is another significant area of research. As problems become more complex and data volumes grow, researchers are working on ways to make swarm intelligence algorithms more scalable and efficient. This includes developing algorithms that handle large datasets and high-dimensional spaces more effectively while using computational resources economically, reducing the time and cost of running them. For more on this, check out “Recent Developments in the Theory and Applicability of Swarm Search.”

Swarm algorithms are being applied to problems ranging from robotics to large language models (LLMs) to medical diagnosis. There is ongoing research into whether these algorithms can help LLMs strategically forget information to comply with Right to Be Forgotten regulations. And, of course, swarm algorithms have a multitude of applications in data science.

Conclusion

Swarm intelligence offers powerful solutions for optimization problems across various industries. Its principles of decentralization, positive feedback, and adaptation allow it to tackle complex, dynamic tasks that traditional algorithms might struggle with.

Check out this review of the current state of swarm algorithms, “Swarm intelligence: A survey of model classification and applications”.

For a deeper dive into the business applications of AI, check out Artificial Intelligence (AI) Strategy or Artificial Intelligence for Business Leaders. To learn about other algorithms that imitate nature, check out Genetic Algorithm: Complete Guide With Python Implementation.

Posted in AI on 2025-03-24.
