Swarm Intelligence Algorithms: Three Python Implementations

Imagine watching a flock of birds in flight. There's no leader, no one giving directions, yet they swoop and glide together in perfect harmony. It may look like chaos, but there's a hidden order. You can see the same pattern in schools of fish avoiding predators or ants finding the shortest path to food. These creatures rely on simple rules and local communication to tackle surprisingly complex tasks without central control.

That’s the magic of swarm intelligence.

We can replicate this behavior using algorithms that solve tough problems by mimicking swarm intelligence.

Particle Swarm Optimization (PSO)

Particle swarm optimization (PSO) draws its inspiration from the behavior of flocks of birds and schools of fish. In these natural systems, individuals move based on their own previous experiences and their neighbors' positions, gradually adjusting to follow the most successful members of the group. PSO applies this concept to optimization problems, where agents, called particles, move through the search space to find an optimal solution.

Compared to ant colony optimization (ACO), PSO operates in continuous rather than discrete spaces. In ACO, the focus is on pathfinding and discrete choices, while PSO is better suited for problems involving continuous variables, such as parameter tuning.

In PSO, particles explore a search space. They adjust their positions based on two main factors: their personal best-known position and the best-known position of the entire swarm. This dual feedback mechanism enables them to converge toward the global optimum.
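The dual feedback described above is usually written as a pair of update rules. The block below is the common textbook form with an inertia weight, shown as a sketch rather than the only variant. Here $x_i$ and $v_i$ are the position and velocity of particle $i$, $p_i$ is its personal best position, $g$ is the swarm's best position, $w$ is the inertia weight, $c_1$ and $c_2$ are the cognitive and social coefficients, and $r_1$, $r_2$ are vectors of random numbers drawn uniformly from $[0, 1)$ at every step.

```latex
\begin{aligned}
v_i &\leftarrow w\,v_i + c_1\, r_1 \odot (p_i - x_i) + c_2\, r_2 \odot (g - x_i) \\
x_i &\leftarrow x_i + v_i
\end{aligned}
```

The first term keeps the particle moving in its current direction, the second pulls it back toward its own best position, and the third pulls it toward the best position the swarm has found.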

How particle swarm optimization works

The process starts with a swarm of particles initialized randomly across the solution space. Each particle represents a possible solution to the optimization problem. As the particles move, they remember their personal best positions (the best solution they’ve encountered so far) and are attracted toward the global best position (the best solution any particle has found).

This movement is driven by two factors: exploitation and exploration. Exploitation involves refining the search around the current best solution, while exploration encourages particles to search other parts of the solution space to avoid getting stuck in local optima. By balancing these two dynamics, PSO efficiently converges on the best solution.
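The process described above can be sketched in a few lines of NumPy. This is a minimal illustration, not a tuned implementation: the `sphere` objective, the function name `pso_minimize`, and the parameter values (`w=0.7`, `c1=1.5`, `c2=1.5`) are assumptions chosen for the example.

```python
import numpy as np

def sphere(x):
    """Toy objective (an assumption for this sketch): minimized at the origin."""
    return float(np.sum(x ** 2))

def pso_minimize(objective, dim=2, n_particles=20, n_iter=100,
                 w=0.7, c1=1.5, c2=1.5, bound=(-5.0, 5.0), seed=0):
    rng = np.random.default_rng(seed)
    # Random initial positions, zero initial velocities
    pos = rng.uniform(bound[0], bound[1], (n_particles, dim))
    vel = np.zeros((n_particles, dim))
    # Personal bests start at the initial positions
    pbest_pos = pos.copy()
    pbest_val = np.array([objective(p) for p in pos])
    g = int(np.argmin(pbest_val))
    gbest_pos, gbest_val = pbest_pos[g].copy(), float(pbest_val[g])

    for _ in range(n_iter):
        r1 = rng.random((n_particles, dim))
        r2 = rng.random((n_particles, dim))
        # Inertia + pull toward personal best + pull toward global best
        vel = w * vel + c1 * r1 * (pbest_pos - pos) + c2 * r2 * (gbest_pos - pos)
        pos = np.clip(pos + vel, bound[0], bound[1])
        vals = np.array([objective(p) for p in pos])
        # Update personal bests where the new position is better
        improved = vals < pbest_val
        pbest_pos[improved] = pos[improved]
        pbest_val[improved] = vals[improved]
        # Update the global best if any personal best beats it
        g = int(np.argmin(pbest_val))
        if pbest_val[g] < gbest_val:
            gbest_pos, gbest_val = pbest_pos[g].copy(), float(pbest_val[g])
    return gbest_pos, gbest_val

best_pos, best_val = pso_minimize(sphere)
print("best position:", best_pos, "best value:", best_val)
```

A larger `w` keeps particles moving longer and favors exploration, while larger `c1` and `c2` pull them harder toward known good positions and favor exploitation.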

Particle swarm optimization Python implementation

In financial portfolio management, finding the asset allocation that maximizes returns while keeping risk low can be tricky. Let’s use PSO to find the mix of assets that gives the best return relative to risk.

The code below shows how PSO works for optimizing a fictional financial portfolio. It starts with random asset allocations, then tweaks them over several iterations based on what works best, gradually finding the optimal mix of assets for the highest return with the lowest risk.

import numpy as np
import matplotlib.pyplot as plt

# A particle represents one candidate portfolio (a vector of asset weights)
class Particle:
    def __init__(self, n_assets):
        # Initialize a particle with random weights and velocities
        self.position = np.random.rand(n_assets)
        self.position /= np.sum(self.position)  # Normalize weights so they sum to 1
        self.velocity = np.random.rand(n_assets)
        self.best_position = np.copy(self.position)
        self.best_score = float('inf')  # Start with a very high score

def objective_function(weights, returns, covariance):
    """
    Calculate the portfolio's performance.
    - weights: Asset weights in the portfolio.
    - returns: Expected returns of the assets.
    - covariance: Covariance matrix representing risk.
    """
    portfolio_return = np.dot(weights, returns)  # Calculate the portfolio return
    portfolio_risk = np.sqrt(np.dot(weights.T, np.dot(covariance, weights)))  # Calculate portfolio risk (standard deviation)
    # Negated so that minimizing the score maximizes return per unit of risk
    return -portfolio_return / portfolio_risk

def update_particles(particles, global_best_position, returns, covariance, w, c1, c2):
    """
    Update the position and velocity of each particle.
    - particles: List of particle objects.
    - global_best_position: Best position found by all particles.
    - returns: Expected returns of the assets.
    - covariance: Covariance matrix representing risk.
    - w: Inertia weight to control particle's previous velocity effect.
    - c1: Cognitive coefficient to pull particles towards their own best position.
    - c2: Social coefficient to pull particles towards the global best position.
    """
    for particle in particles:
        # Random coefficients for velocity update
        r1, r2 = np.random.rand(len(particle.position)), np.random.rand(len(particle.position))
        # Update velocity
        particle.velocity = (w * particle.velocity
                             + c1 * r1 * (particle.best_position - particle.position)
                             + c2 * r2 * (global_best_position - particle.position))
        # Update position
        particle.position += particle.velocity
        particle.position = np.clip(particle.position, 0, 1)  # Ensure weights are between 0 and 1
        particle.position /= np.sum(particle.position)  # Normalize weights to sum to 1
        # Evaluate the new position
        score = objective_function(particle.position, returns, covariance)
        if score < particle.best_score:
            # Record a new personal best (lower score is better)
            particle.best_score = score
            particle.best_position = np.copy(particle.position)

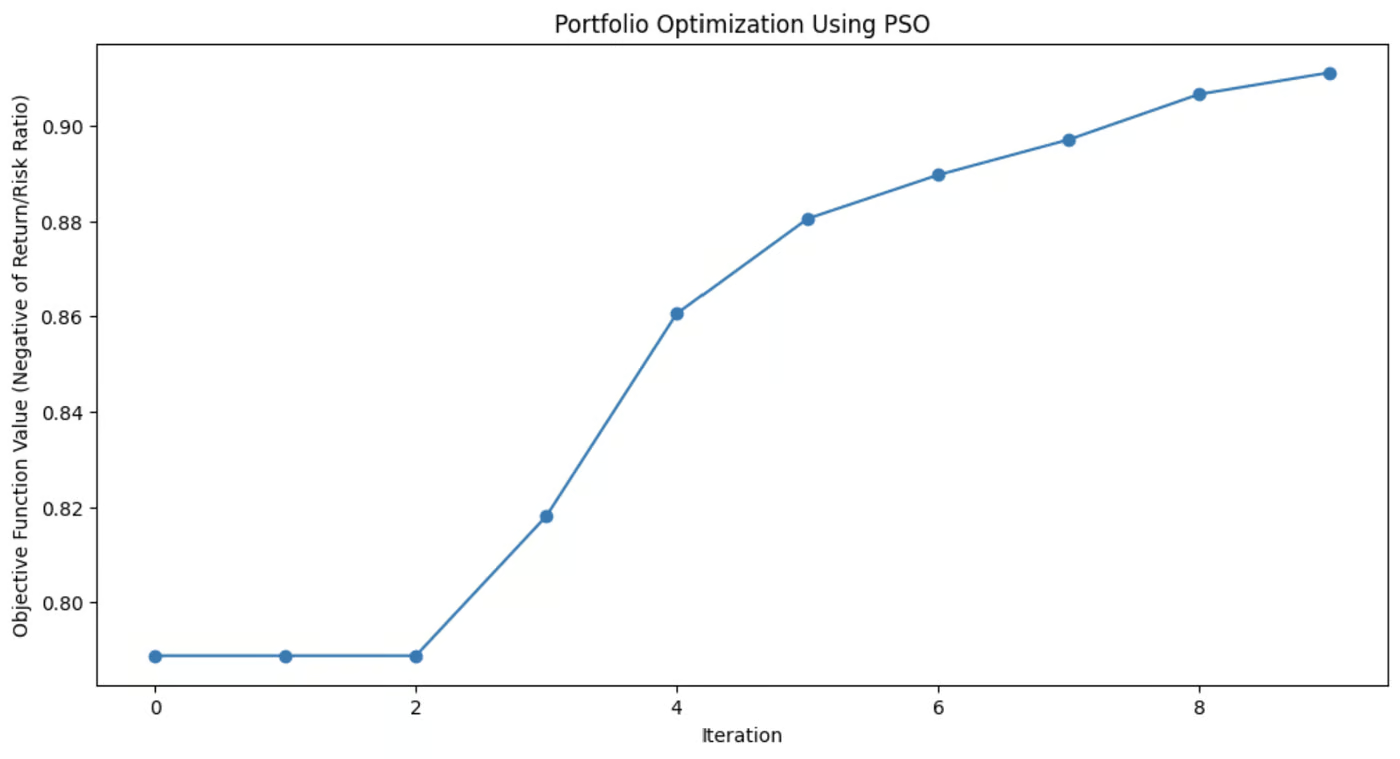

This graph demonstrates how much the PSO algorithm improved the portfolio’s asset mix with each iteration.
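A compact, self-contained way to run this kind of portfolio search end to end might look like the sketch below. The expected returns, the covariance matrix, the helper names (`neg_return_to_risk`, `normalize`, `run_pso`), and the parameter values are all hypothetical, chosen only for illustration. The sketch stores the swarm as arrays rather than particle objects, and records the best return-to-risk ratio after each iteration so it can be plotted.

```python
import numpy as np

rng = np.random.default_rng(42)

# Hypothetical inputs: expected returns and a covariance matrix for three assets
returns = np.array([0.02, 0.28, 0.12])
covariance = np.array([[0.10, 0.02, 0.03],
                       [0.02, 0.08, 0.04],
                       [0.03, 0.04, 0.07]])

def neg_return_to_risk(weights):
    """Negative return-to-risk ratio, so that lower is better."""
    risk = np.sqrt(weights @ covariance @ weights)
    return -(weights @ returns) / risk

def normalize(pos):
    """Clip weights to [0, 1] and rescale each row so it sums to 1."""
    pos = np.clip(pos, 0.0, 1.0)
    total = pos.sum(axis=1, keepdims=True)
    equal = np.full_like(pos, 1.0 / pos.shape[1])  # fallback if a row clips to all zeros
    safe_total = np.where(total > 0, total, 1.0)
    return np.where(total > 0, pos / safe_total, equal)

def run_pso(n_particles=30, n_iter=100, w=0.5, c1=1.5, c2=1.5):
    n_assets = len(returns)
    pos = normalize(rng.random((n_particles, n_assets)))
    vel = rng.uniform(-0.1, 0.1, (n_particles, n_assets))
    pbest = pos.copy()
    pbest_score = np.array([neg_return_to_risk(p) for p in pos])
    history = []  # best return-to-risk ratio after each iteration
    for _ in range(n_iter):
        gbest = pbest[np.argmin(pbest_score)]
        r1, r2 = rng.random(pos.shape), rng.random(pos.shape)
        vel = w * vel + c1 * r1 * (pbest - pos) + c2 * r2 * (gbest - pos)
        pos = normalize(pos + vel)
        score = np.array([neg_return_to_risk(p) for p in pos])
        better = score < pbest_score
        pbest[better], pbest_score[better] = pos[better], score[better]
        history.append(float(-pbest_score.min()))
    return pbest[np.argmin(pbest_score)], history

best_weights, history = run_pso()
print("weights:", np.round(best_weights, 3), "ratio:", round(history[-1], 4))
```

Passing `history` to `matplotlib.pyplot.plot` gives a convergence curve of the same kind as the graph above.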

Applications of particle swarm optimization

PSO is used for its simplicity and effectiveness in solving various optimization problems, particularly in continuous domains. Its flexibility makes it useful for many real-world scenarios where precise solutions are needed.

These applications include:

- Machine learning: PSO can be applied to tune hyperparameters in machine learning algorithms, helping to find the best model configurations.

- Engineering design: PSO is useful for optimizing design parameters for systems like aerospace components or electrical circuits.

- Financial modeling: In finance, PSO can help in portfolio optimization, minimizing risk while maximizing returns.

PSO's ability to efficiently explore solution spaces makes it applicable across fields, from robotics to energy management to logistics.

Artificial Bee Colony (ABC)

The artificial bee colony (ABC) algorithm is modeled on the foraging behavior of honeybees.

In nature, honeybees efficiently search for nectar sources and share this information with other members of the hive. ABC captures this collaborative search process and applies it to optimization problems, especially those involving complex, high-dimensional spaces.

What sets ABC apart from other swarm intelligence algorithms is its ability to balance exploitation, focusing on refining current solutions, and exploration, searching for new and potentially better solutions. This makes ABC particularly useful for large-scale problems where global optimization is key.

How artificial bee colony works

In the ABC algorithm, the swarm of bees is divided into three specialized roles: employed bees, onlookers, and scouts. Each of these roles mimics a different aspect of how bees search for and exploit food sources in nature.

- Employed bees: These bees are responsible for exploring known food sources, representing current solutions in the optimization problem. They assess the quality (fitness) of these sources and share the information with the rest of the hive.

- Onlooker bees: After gathering information from the employed bees, onlookers select which food sources to explore further. They base their choices on the quality of the solutions shared by the employed bees, focusing more on the better options, thus refining the search for an optimal solution.

- Scout bees: When an employed bee’s food source (solution) becomes exhausted or stagnant (when no improvement is found after a certain number of iterations), the bee becomes a scout. Scouts explore new areas of the solution space, searching for potentially unexplored food sources, thus injecting diversity into the search process.

This dynamic allows ABC to balance the search between intensively exploiting promising areas and broadly exploring new areas of the search space. This helps the algorithm avoid getting trapped in local optima and increases its chances of finding a global optimum.
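The onlooker step is often implemented as fitness-proportional ("roulette wheel") selection. The sketch below assumes a minimization problem and uses the fitness transform commonly paired with ABC, where a cost `f >= 0` maps to `1 / (1 + f)` and a negative cost maps to `1 + |f|`. The function name and the sample costs are made up for the example.

```python
import numpy as np

def selection_probabilities(costs):
    """Turn objective values (lower is better) into onlooker selection probabilities."""
    costs = np.asarray(costs, dtype=float)
    fitness = np.where(costs >= 0,
                       1.0 / (1.0 + np.maximum(costs, 0.0)),  # non-negative costs
                       1.0 + np.abs(costs))                   # negative costs
    return fitness / fitness.sum()

# Hypothetical costs of three food sources reported by employed bees
costs = np.array([0.5, 2.0, 10.0])
probs = selection_probabilities(costs)

# Each onlooker picks a source at random, weighted by these probabilities
rng = np.random.default_rng(0)
picks = rng.choice(len(costs), size=1000, p=probs)
counts = np.bincount(picks, minlength=len(costs))
print("probabilities:", np.round(probs, 3), "picks per source:", counts)
```

Better sources attract more onlookers, yet weaker ones still receive some visits, which keeps the search from collapsing onto a single source too early.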

Artificial bee colony Python implementation

The Rastrigin function is a popular problem in optimization, known for its numerous local minima, making it a tough challenge for many algorithms. The goal is simple: find the global minimum.
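For reference, the Rastrigin function in n dimensions is f(x) = A·n + Σ (x_i² − A·cos(2π·x_i)) with A = 10, and its global minimum is f(0) = 0. A small vectorized sketch shows why it is hard: a point one unit away from the origin in each coordinate sits close to a local minimum whose value is still low.

```python
import numpy as np

def rastrigin(x, A=10.0):
    """Rastrigin function: A*n + sum(x_i^2 - A*cos(2*pi*x_i))."""
    x = np.asarray(x, dtype=float)
    return float(A * x.size + np.sum(x ** 2 - A * np.cos(2 * np.pi * x)))

at_origin = rastrigin([0.0, 0.0])    # the global minimum
near_local = rastrigin([1.0, 1.0])   # close to one of the many local minima
between = rastrigin([0.5, 0.5])      # on a ridge between minima
print(at_origin, near_local, between)
```

An optimizer that only moves downhill from a starting point near (1, 1) would settle in that nearby basin and never reach the origin.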

In this example, we’ll use the artificial bee colony algorithm to tackle this problem. Each bee in the ABC algorithm explores the search space, looking for better solutions to minimize the function. The code simulates bees that explore, exploit, and scout for new areas, ensuring a balance between exploration and exploitation.

import numpy as np
import matplotlib.pyplot as plt

# Rastrigin function: The objective is to minimize this function
def rastrigin(X):
    A = 10
    return A * len(X) + sum([(x ** 2 - A * np.cos(2 * np.pi * x)) for x in X])

# Artificial Bee Colony (ABC) algorithm for continuous optimization of Rastrigin function
def artificial_bee_colony_rastrigin(n_iter=100, n_bees=30, dim=2, bound=(-5.12, 5.12)):
    """
    Apply Artificial Bee Colony (ABC) algorithm to minimize the Rastrigin function.

    Parameters:
    n_iter (int): Number of iterations
    n_bees (int): Number of bees in the population
    dim (int): Number of dimensions (variables)
    bound (tuple): Bounds for the search space (min, max)

    Returns:
    tuple: Best solution found, best fitness value, and list of best fitness values per iteration
    """
    # Initialize the bee population with random solutions within the given bounds
    bees = np.random.uniform(bound[0], bound[1], (n_bees, dim))
    best_bee = bees[0].copy()  # Copy so later updates to bees[0] do not alter the stored best
    best_fitness = rastrigin(best_bee)
    best_fitnesses = []

    for iteration in range(n_iter):
        # Employed bees phase: Explore new solutions based on the current bees
        for i in range(n_bees):
            # Generate a new candidate solution by perturbing the current bee's position
            new_bee = bees[i] + np.random.uniform(-1, 1, dim)
            new_bee = np.clip(new_bee, bound[0], bound[1])  # Keep within bounds
            # Evaluate the fitness of the new solution
            new_fitness = rastrigin(new_bee)
            if new_fitness < rastrigin(bees[i]):
                bees[i] = new_bee  # Keep the candidate only if it improves on the current position

        # Assumed minimal completion (the listing breaks off after the comparison above):
        # record the best solution so far so the function returns what its docstring promises
        fitnesses = np.array([rastrigin(bee) for bee in bees])
        if fitnesses.min() < best_fitness:
            best_bee = bees[np.argmin(fitnesses)].copy()
            best_fitness = fitnesses.min()
        best_fitnesses.append(best_fitness)

    return best_bee, best_fitness, best_fitnesses

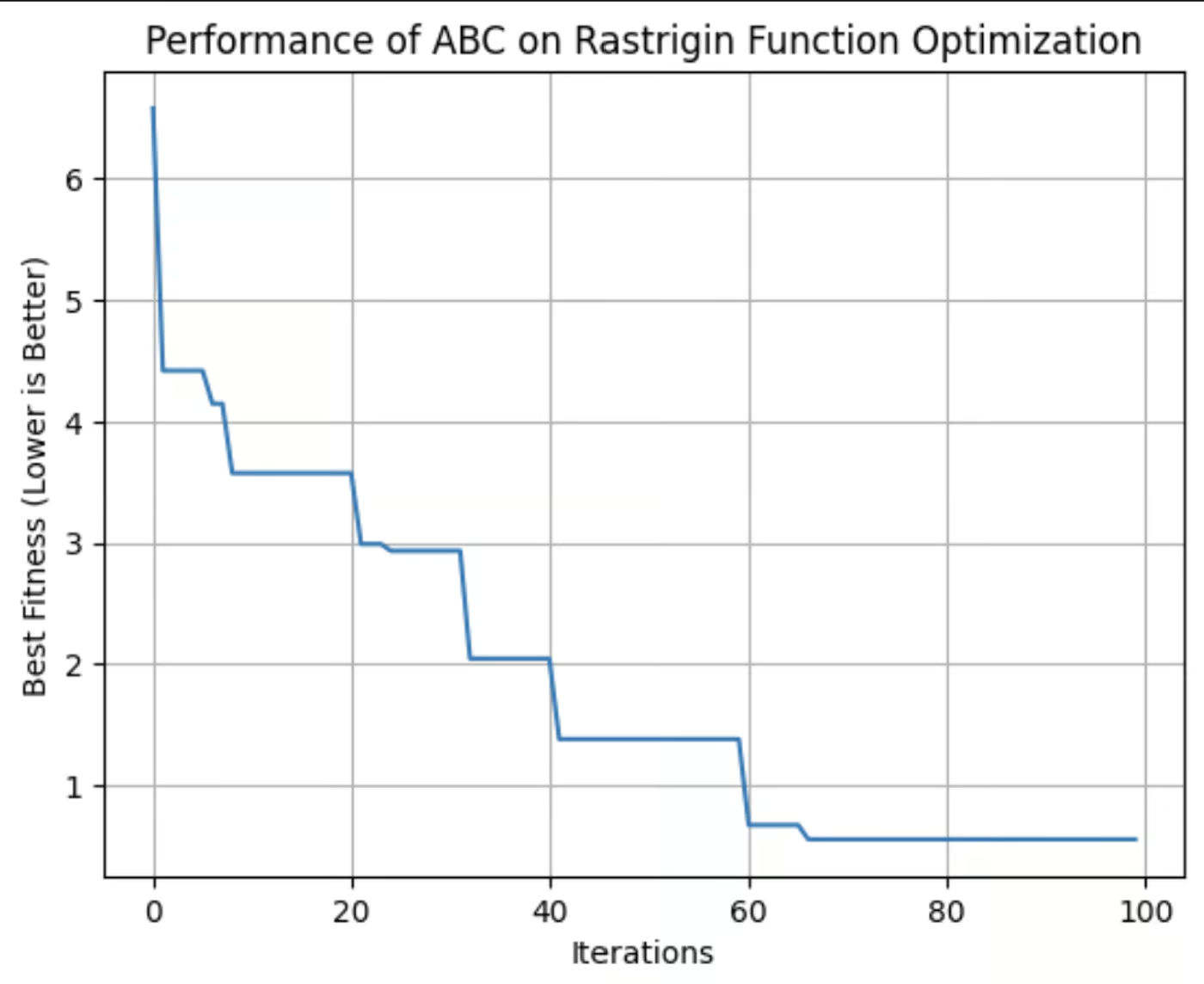

This graph shows the fitness of the best solution found by the ABC algorithm with each iteration. In this run, it reached its optimum fitness around the 64th iteration.

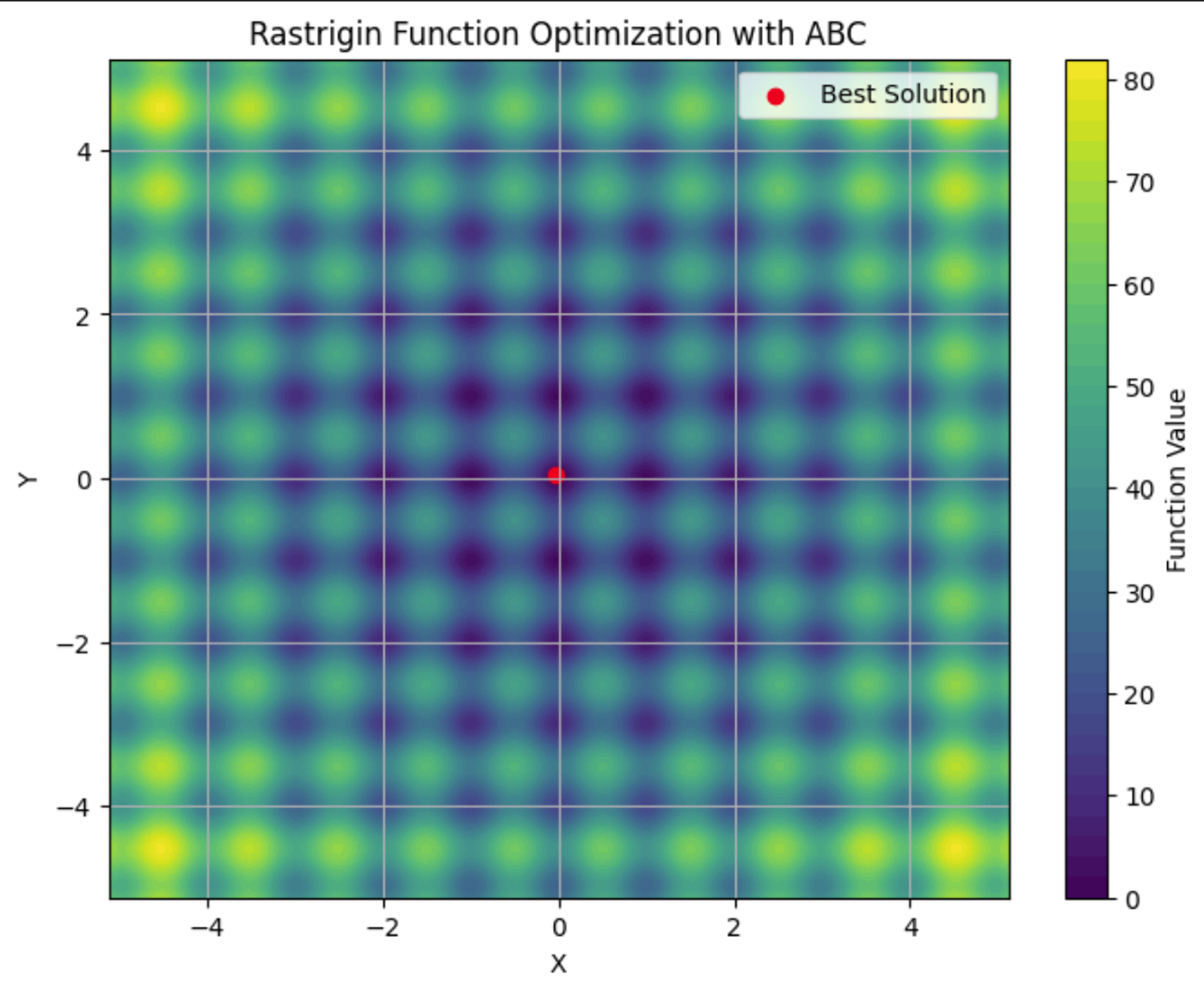

Here you can see the Rastrigin function plotted on a contour plot, with its many local minima. The red dot is the global minimum found by the ABC algorithm we ran.
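For readers who want to see all three bee roles working together, here is a self-contained sketch. It is one possible arrangement, not a reference implementation: it uses the neighbor rule often seen in ABC write-ups (move one coordinate relative to a randomly chosen other source) instead of a uniform random perturbation, and the names `abc_minimize`, `try_neighbor`, `n_sources`, and `limit` are assumptions made for this example.

```python
import numpy as np

def rastrigin(x, A=10.0):
    x = np.asarray(x, dtype=float)
    return float(A * x.size + np.sum(x ** 2 - A * np.cos(2 * np.pi * x)))

def abc_minimize(objective, dim=2, n_sources=20, n_iter=200, limit=20,
                 bound=(-5.12, 5.12), seed=0):
    rng = np.random.default_rng(seed)
    lo, hi = bound
    sources = rng.uniform(lo, hi, (n_sources, dim))   # one food source per employed bee
    costs = np.array([objective(s) for s in sources])
    trials = np.zeros(n_sources, dtype=int)           # iterations without improvement
    start = int(np.argmin(costs))
    best, best_cost = sources[start].copy(), float(costs[start])
    history = []

    def try_neighbor(i):
        # Change one coordinate of source i relative to a random other source k
        k = rng.choice([j for j in range(n_sources) if j != i])
        d = rng.integers(dim)
        candidate = sources[i].copy()
        candidate[d] += rng.uniform(-1, 1) * (sources[i, d] - sources[k, d])
        candidate = np.clip(candidate, lo, hi)
        cost = objective(candidate)
        if cost < costs[i]:   # greedy selection: keep the candidate only if it is better
            sources[i], costs[i], trials[i] = candidate, cost, 0
        else:
            trials[i] += 1

    for _ in range(n_iter):
        # Employed bees: each one searches around its own source
        for i in range(n_sources):
            try_neighbor(i)
        # Onlookers: choose sources with probability proportional to fitness
        fitness = 1.0 / (1.0 + costs)   # valid because Rastrigin values are non-negative
        probs = fitness / fitness.sum()
        for i in rng.choice(n_sources, size=n_sources, p=probs):
            try_neighbor(i)
        # Remember the best source before any source is abandoned
        i_best = int(np.argmin(costs))
        if costs[i_best] < best_cost:
            best, best_cost = sources[i_best].copy(), float(costs[i_best])
        # Scout: abandon the most stagnant source once it exceeds the trial limit
        stale = int(np.argmax(trials))
        if trials[stale] > limit:
            sources[stale] = rng.uniform(lo, hi, dim)
            costs[stale] = objective(sources[stale])
            trials[stale] = 0
        history.append(best_cost)
    return best, best_cost, history

best, best_cost, history = abc_minimize(rastrigin)
print("best solution:", best, "best cost:", best_cost)
```

The `limit` parameter controls how long a stagnant source is tolerated before a scout replaces it. Lower values inject more diversity at the cost of discarding sources that might still improve.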

Applications of artificial bee colony

The ABC algorithm is a robust tool for solving optimization problems. Its ability to efficiently explore large and complex search spaces makes it a go-to choice for industries where adaptability and scalability are critical.

These applications include:

- Telecommunications: ABC can be used to optimize the placement of network resources and antennas, maximizing coverage and signal strength while minimizing costs.

- Engineering: ABC can fine-tune parameters in structural design optimization.

- Data Science: ABC can be applied to feature selection, to identify the most important variables in a dataset for machine learning.

ABC is a flexible algorithm suitable for any problem where optimal solutions need to be found in dynamic, high-dimensional environments. Its decentralized nature makes it well-suited for situations where other algorithms may struggle to balance exploration and exploitation efficiently.

Comparing Swarm Intelligence Algorithms

There are multiple swarm intelligence algorithms, each with different attributes. When deciding which to use, it's important to weigh their strengths and weaknesses to decide which best suits your needs.

ACO is effective for combinatorial problems like routing and scheduling but may need significant computational resources. PSO is simpler and excels in continuous optimization, such as hyperparameter tuning, but can struggle with local optima. ABC successfully balances exploration and exploitation, though it requires careful tuning.

Other swarm intelligence algorithms, such as Firefly Algorithm and Cuckoo Search Optimization, also offer unique advantages for specific types of optimization problems.

| Algorithm | Strengths | Weaknesses | Preferred Libraries | Best Applications |
| --- | --- | --- | --- | --- |
| Ant Colony Optimization (ACO) | Effective for combinatorial problems and handles complex discrete spaces well | Computationally intensive and requires fine-tuning | pyaco | Routing problems, scheduling, and resource allocation |
| Particle Swarm Optimization (PSO) | Good for continuous optimization and simple and easy to implement | Can converge to local optima and is less effective for discrete problems | pyswarms | Hyperparameter tuning, engineering design, financial modeling |
| Artificial Bee Colony (ABC) | Adaptable to large, dynamic problems and balanced exploration and exploitation | Computationally intensive and requires careful parameter tuning | beecolpy | Telecommunications, large-scale optimization, and high-dimensional spaces |
| Firefly Algorithm (FA) | Excels in multimodal optimization and has strong global search ability | Sensitive to parameter settings and slower convergence | fireflyalgorithm | Image processing, engineering design, and multimodal optimization |
| Cuckoo Search (CS) | Efficient for solving optimization problems and has strong exploration capabilities | May converge prematurely and performance depends on tuning | cso | Scheduling, feature selection, and engineering applications |

Challenges and Limitations

Swarm intelligence algorithms, like many machine learning techniques, encounter challenges that can affect their performance. These include:

- Premature convergence: The swarm may settle on a suboptimal solution too quickly.

- Parameter tuning: Achieving optimal results often requires careful adjustment of algorithm settings.

- Computational resources & scalability: These algorithms can be computationally intensive, especially with larger, more complex problems, and their performance might degrade as problem complexity increases.

- Stochastic nature: The inherent randomness in these algorithms can lead to variability in results.
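The variability caused by randomness is easy to measure: run the same optimizer with several seeds and report the spread instead of a single result. The sketch below does this with a compact PSO on a 5-dimensional Rastrigin function. The function names, parameter values, and the choice of ten seeds are assumptions made for the illustration.

```python
import numpy as np

def rastrigin(x, A=10.0):
    """Vectorized Rastrigin: evaluates every row of x."""
    return A * x.shape[-1] + np.sum(x ** 2 - A * np.cos(2 * np.pi * x), axis=-1)

def pso_best_cost(seed, dim=5, n_particles=20, n_iter=100, w=0.7, c1=1.5, c2=1.5):
    rng = np.random.default_rng(seed)   # all randomness flows from this seed
    pos = rng.uniform(-5.12, 5.12, (n_particles, dim))
    vel = np.zeros_like(pos)
    pbest, pbest_cost = pos.copy(), rastrigin(pos)
    for _ in range(n_iter):
        gbest = pbest[np.argmin(pbest_cost)]
        r1, r2 = rng.random(pos.shape), rng.random(pos.shape)
        vel = w * vel + c1 * r1 * (pbest - pos) + c2 * r2 * (gbest - pos)
        pos = np.clip(pos + vel, -5.12, 5.12)
        cost = rastrigin(pos)
        better = cost < pbest_cost
        pbest[better], pbest_cost[better] = pos[better], cost[better]
    return float(pbest_cost.min())

# Same algorithm, same settings, ten different seeds
results = np.array([pso_best_cost(seed) for seed in range(10)])
print(f"best={results.min():.3f}  mean={results.mean():.3f}  std={results.std():.3f}")
```

Fixing the seed makes an individual run reproducible, while the spread across seeds gives a more honest picture of how often the swarm stalls in a local optimum.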

Latest Research and Advancements

A notable trend is the integration of swarm intelligence with other machine learning techniques. Researchers are exploring how swarm algorithms can enhance tasks such as feature selection and hyperparameter optimization. For an example, see “A hybrid particle swarm optimization algorithm for solving engineering problem”.

Recent advancements also focus on addressing some of the traditional challenges associated with swarm intelligence, such as premature convergence. New algorithms and techniques are being developed to mitigate the risk of converging on suboptimal solutions. For more information, see “Memory-based approaches for eliminating premature convergence in particle swarm optimization”.

Scalability is another significant area of research. As problems become increasingly complex and data volumes grow, researchers are working on ways to make swarm intelligence algorithms more scalable and efficient. This includes developing algorithms that can handle large datasets and high-dimensional spaces more effectively, while optimizing computational resources to reduce the time and cost associated with running these algorithms. For more on this, see “Recent Developments in the Theory and Applicability of Swarm Search”.

Swarm algorithms are being applied to problems from robotics, to large language models (LLMs), to medical diagnosis. There is ongoing research into whether these algorithms can be useful for helping LLMs strategically forget information to comply with Right to Be Forgotten regulations. And, of course, swarm algorithms have a multitude of applications in data science.

Conclusion

Swarm intelligence offers powerful solutions for optimization problems across various industries. Its principles of decentralization, positive feedback, and adaptation allow it to tackle complex, dynamic tasks that traditional algorithms might struggle with.

Check out this review of the current state of swarm algorithms, “Swarm intelligence: A survey of model classification and applications”.

For a deeper dive into the business applications of AI, check out Artificial Intelligence (AI) Strategy or Artificial Intelligence for Business Leaders. To learn about other algorithms that imitate nature, check out Genetic Algorithm: Complete Guide With Python Implementation.
