Huge Daily Developments for FLUX LoRA Training (Now Even Works on 8 GB GPUs) and More

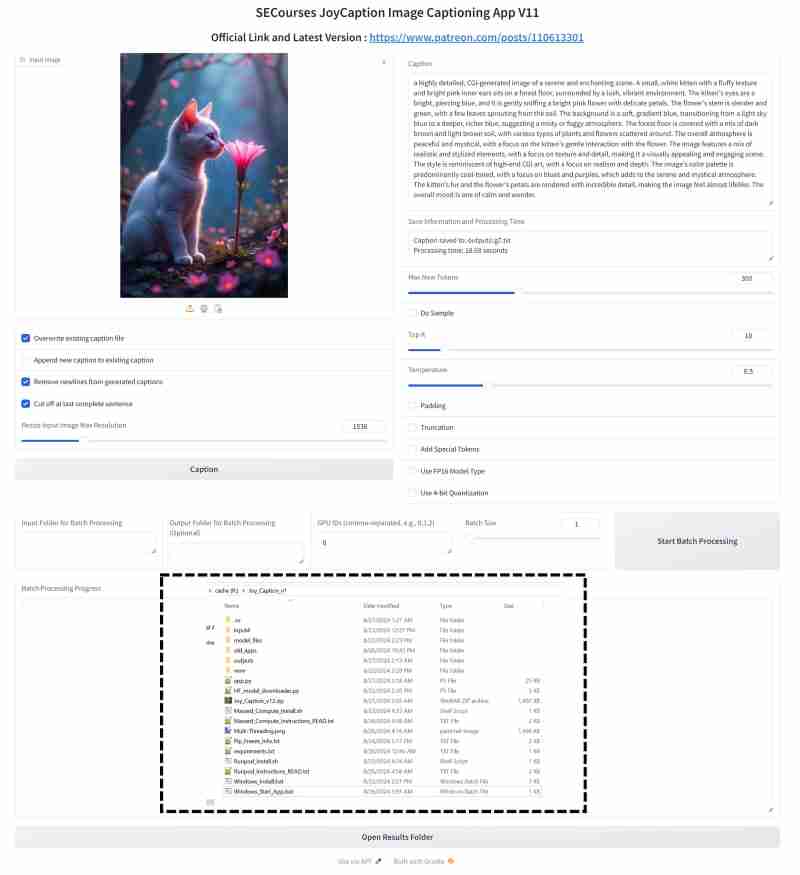

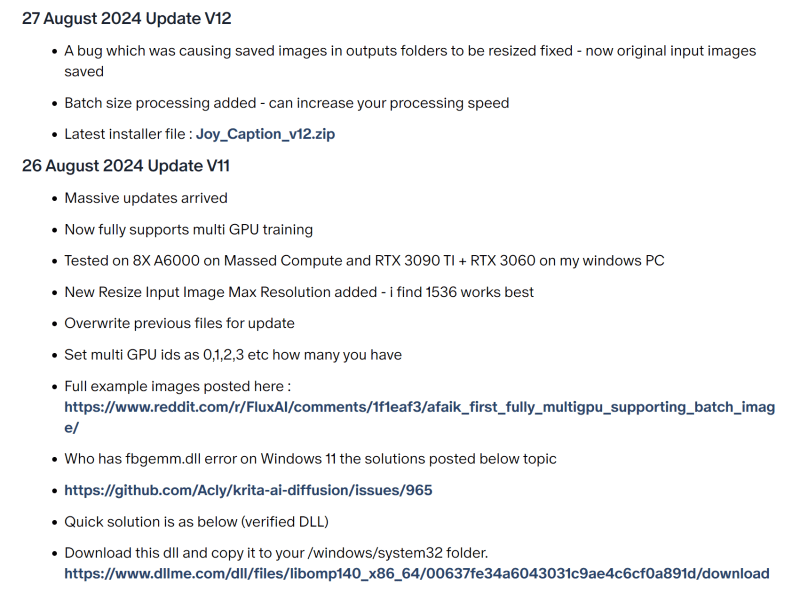

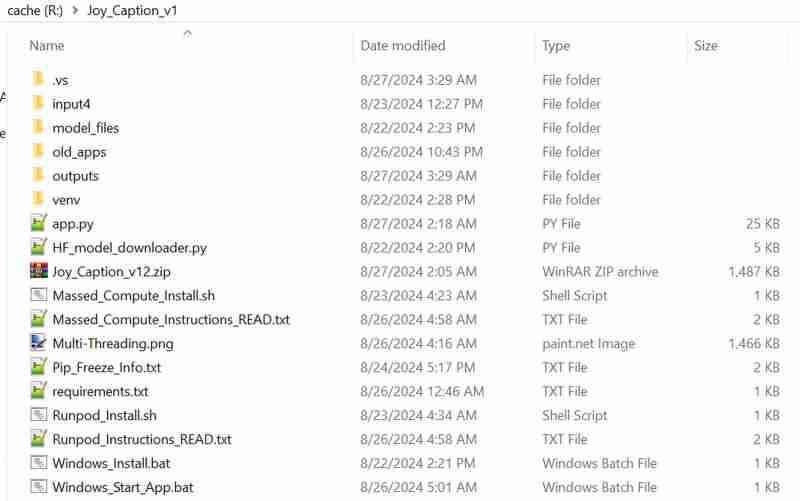

JoyCaption now has both multi-GPU support and batch size support: https://www.patreon.com/posts/110613301

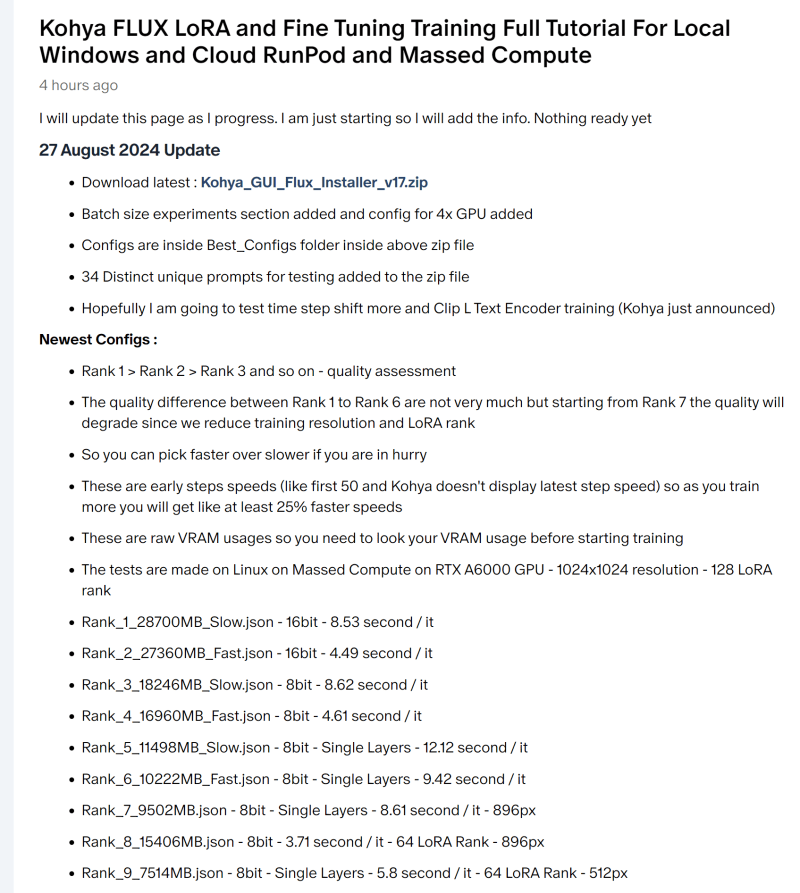

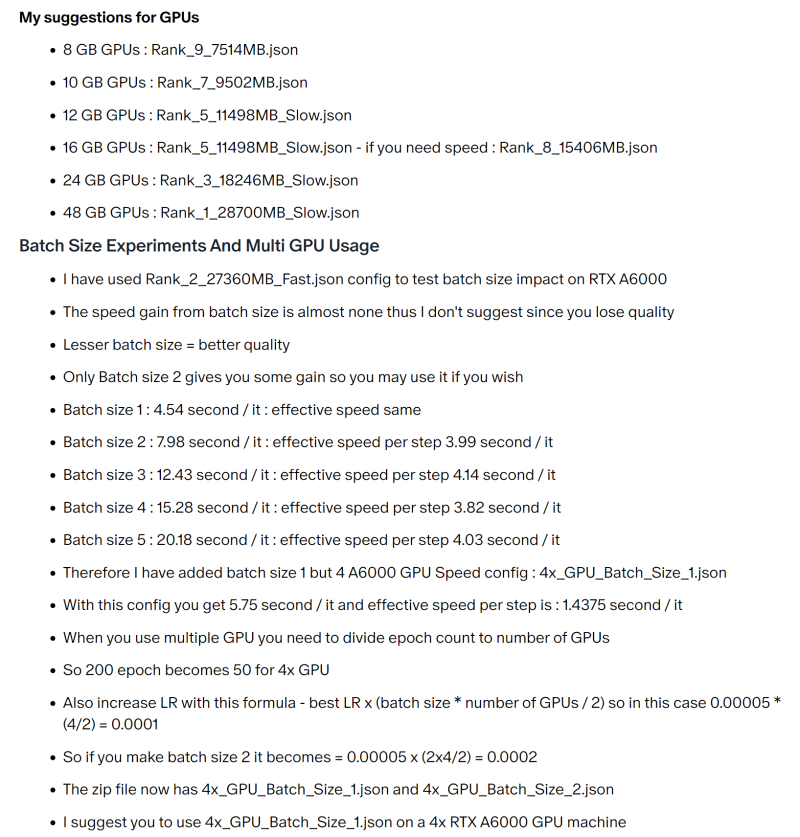

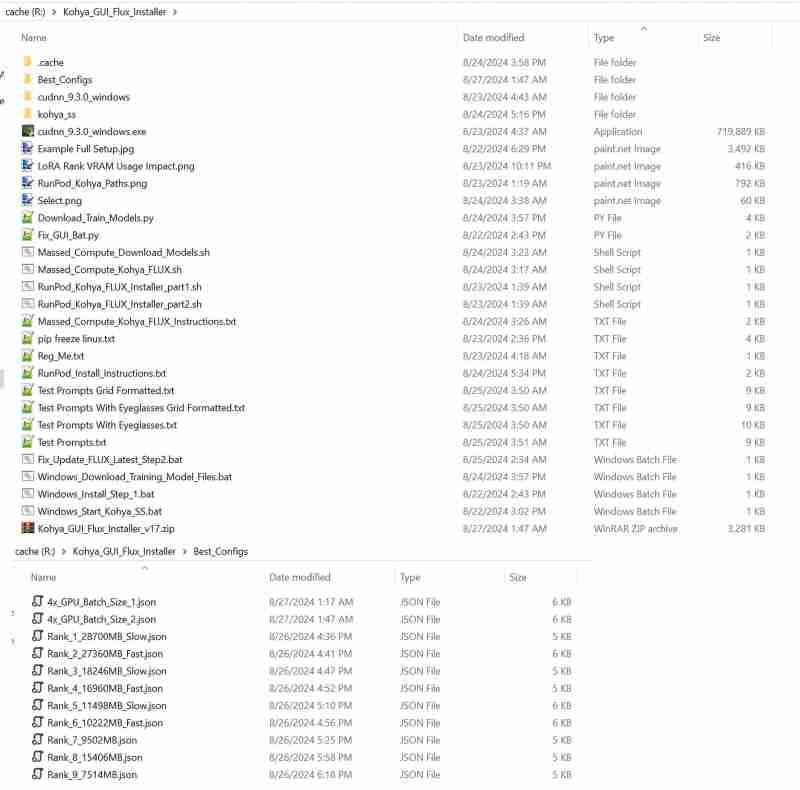

The FLUX LoRA training configurations are fully updated and now work on GPUs with as little as 8 GB of VRAM. Yes, you can train a 12-billion-parameter model on an 8 GB GPU, with very good speed and quality: https://www.patreon.com/posts/110293257
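For a sense of why this is notable, here is a rough back-of-the-envelope sketch of the memory that the weights of a 12-billion-parameter model would need at a few common precisions. The helper name `weight_memory_gib` and the precisions listed are illustrative assumptions, not settings taken from the linked configurations, and the estimate ignores activations, gradients, and optimizer state.

```python
def weight_memory_gib(num_params: float, bytes_per_param: float) -> float:
    """Approximate memory for the model weights alone, in GiB."""
    return num_params * bytes_per_param / 2**30


# 12 billion parameters, as stated in the post.
NUM_PARAMS = 12e9

# Hypothetical precisions chosen for illustration only.
PRECISIONS = [
    ("32-bit", 4),
    ("16-bit", 2),
    ("8-bit", 1),
]

for label, bytes_per_param in PRECISIONS:
    gib = weight_memory_gib(NUM_PARAMS, bytes_per_param)
    print(f"{label:>6}: {gib:.1f} GiB for weights alone")
```

Even at one byte per parameter the weights alone come out above 8 GB, so fitting training into 8 GB presumably relies on additional memory-saving techniques. The linked post has the actual settings.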

Check out the images to see all details

