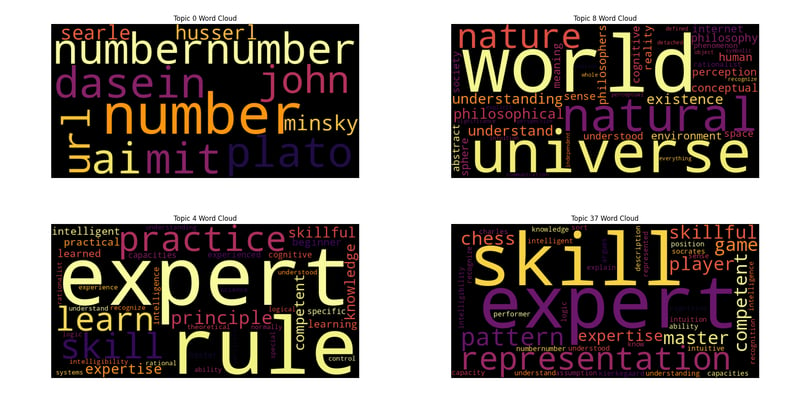

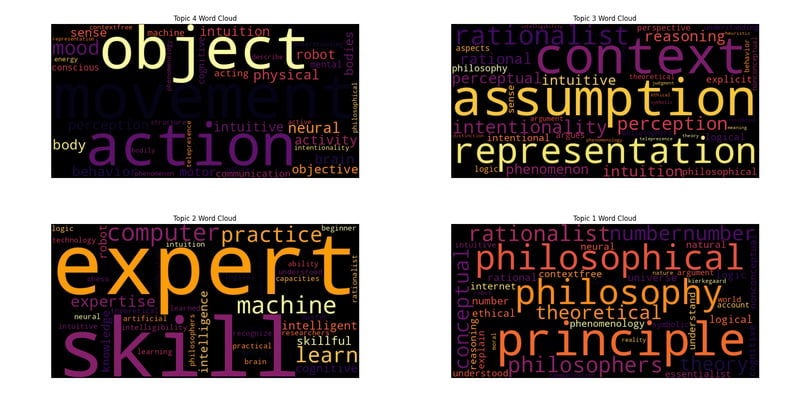

Topc를 사용한 주제 모델링: Dreyfus, AI 및 Wordclouds

2024-07-30에 게시됨

Python을 사용하여 PDF에서 통찰력 추출: 종합 가이드

이 스크립트는 효율적이고 통찰력 있는 분석을 위해 맞춤화된 PDF 처리, 텍스트 추출, 문장 토큰화, 시각화를 통한 주제 모델링 수행을 위한 강력한 워크플로우를 보여줍니다.

라이브러리 개요

- os: 운영 체제와 상호 작용하는 기능을 제공합니다.

- matplotlib.pyplot: Python에서 정적, 애니메이션 및 대화형 시각화를 만드는 데 사용됩니다.

- nltk: 자연어 처리를 위한 라이브러리 및 프로그램 모음인 Natural Language Toolkit.

- pandas: 데이터 조작 및 분석 라이브러리.

- pdftotext: PDF 문서를 일반 텍스트로 변환하기 위한 라이브러리.

- re: 정규식 일치 작업을 제공합니다.

- seaborn: matplotlib를 기반으로 한 통계 데이터 시각화 라이브러리.

- nltk.tokenize.sent_tokenize: 문자열을 문장으로 토큰화하는 NLTK 함수입니다.

- top2vec: 주제 모델링 및 의미 검색을 위한 라이브러리.

- wordcloud: 텍스트 데이터에서 단어 구름을 만들기 위한 라이브러리.

초기 설정

모듈 가져오기

import os import matplotlib.pyplot as plt import nltk import pandas as pd import pdftotext import re import seaborn as sns from nltk.tokenize import sent_tokenize from top2vec import Top2Vec from wordcloud import WordCloud from cleantext import clean

다음으로 punkt 토크나이저가 다운로드되었는지 확인하세요.

nltk.download('punkt')

텍스트 정규화

def normalize_text(text):

"""Normalize text by removing special characters and extra spaces,

and applying various other cleaning options."""

# Apply the clean function with specified parameters

cleaned_text = clean(

text,

fix_unicode=True, # fix various unicode errors

to_ascii=True, # transliterate to closest ASCII representation

lower=True, # lowercase text

no_line_breaks=False, # fully strip line breaks as opposed to only normalizing them

no_urls=True, # replace all URLs with a special token

no_emails=True, # replace all email addresses with a special token

no_phone_numbers=True, # replace all phone numbers with a special token

no_numbers=True, # replace all numbers with a special token

no_digits=True, # replace all digits with a special token

no_currency_symbols=True, # replace all currency symbols with a special token

no_punct=False, # remove punctuations

lang="en", # set to 'de' for German special handling

)

# Further clean the text by removing any remaining special characters except word characters, whitespace, and periods/commas

cleaned_text = re.sub(r"[^\w\s.,]", "", cleaned_text)

# Replace multiple whitespace characters with a single space and strip leading/trailing spaces

cleaned_text = re.sub(r"\s ", " ", cleaned_text).strip()

return cleaned_text

PDF 텍스트 추출

def extract_text_from_pdf(pdf_path):

with open(pdf_path, "rb") as f:

pdf = pdftotext.PDF(f)

all_text = "\n\n".join(pdf)

return normalize_text(all_text)

문장 토큰화

def split_into_sentences(text):

return sent_tokenize(text)

여러 파일 처리

def process_files(file_paths):

authors, titles, all_sentences = [], [], []

for file_path in file_paths:

file_name = os.path.basename(file_path)

parts = file_name.split(" - ", 2)

if len(parts) != 3 or not file_name.endswith(".pdf"):

print(f"Skipping file with incorrect format: {file_name}")

continue

year, author, title = parts

author, title = author.strip(), title.replace(".pdf", "").strip()

try:

text = extract_text_from_pdf(file_path)

except Exception as e:

print(f"Error extracting text from {file_name}: {e}")

continue

sentences = split_into_sentences(text)

authors.append(author)

titles.append(title)

all_sentences.extend(sentences)

print(f"Number of sentences for {file_name}: {len(sentences)}")

return authors, titles, all_sentences

데이터를 CSV로 저장

def save_data_to_csv(authors, titles, file_paths, output_file):

texts = []

for fp in file_paths:

try:

text = extract_text_from_pdf(fp)

sentences = split_into_sentences(text)

texts.append(" ".join(sentences))

except Exception as e:

print(f"Error processing file {fp}: {e}")

texts.append("")

data = pd.DataFrame({

"Author": authors,

"Title": titles,

"Text": texts

})

data.to_csv(output_file, index=False, quoting=1, encoding='utf-8')

print(f"Data has been written to {output_file}")

불용어 로드 중

def load_stopwords(filepath):

with open(filepath, "r") as f:

stopwords = f.read().splitlines()

additional_stopwords = ["able", "according", "act", "actually", "after", "again", "age", "agree", "al", "all", "already", "also", "am", "among", "an", "and", "another", "any", "appropriate", "are", "argue", "as", "at", "avoid", "based", "basic", "basis", "be", "been", "begin", "best", "book", "both", "build", "but", "by", "call", "can", "cant", "case", "cases", "claim", "claims", "class", "clear", "clearly", "cope", "could", "course", "data", "de", "deal", "dec", "did", "do", "doesnt", "done", "dont", "each", "early", "ed", "either", "end", "etc", "even", "ever", "every", "far", "feel", "few", "field", "find", "first", "follow", "follows", "for", "found", "free", "fri", "fully", "get", "had", "hand", "has", "have", "he", "help", "her", "here", "him", "his", "how", "however", "httpsabout", "ibid", "if", "im", "in", "is", "it", "its", "jstor", "june", "large", "lead", "least", "less", "like", "long", "look", "man", "many", "may", "me", "money", "more", "most", "move", "moves", "my", "neither", "net", "never", "new", "no", "nor", "not", "notes", "notion", "now", "of", "on", "once", "one", "ones", "only", "open", "or", "order", "orgterms", "other", "our", "out", "own", "paper", "past", "place", "plan", "play", "point", "pp", "precisely", "press", "put", "rather", "real", "require", "right", "risk", "role", "said", "same", "says", "search", "second", "see", "seem", "seems", "seen", "sees", "set", "shall", "she", "should", "show", "shows", "since", "so", "step", "strange", "style", "such", "suggests", "talk", "tell", "tells", "term", "terms", "than", "that", "the", "their", "them", "then", "there", "therefore", "these", "they", "this", "those", "three", "thus", "to", "todes", "together", "too", "tradition", "trans", "true", "try", "trying", "turn", "turns", "two", "up", "us", "use", "used", "uses", "using", "very", "view", "vol", "was", "way", "ways", "we", "web", "well", "were", "what", "when", "whether", "which", "who", "why", "with", "within", "works", "would", "years", "york", "you", "your", "suggests", "without"]

stopwords.extend(additional_stopwords)

return set(stopwords)

주제에서 불용어 필터링

def filter_stopwords_from_topics(topic_words, stopwords):

filtered_topics = []

for words in topic_words:

filtered_topics.append([word for word in words if word.lower() not in stopwords])

return filtered_topics

워드 클라우드 생성

def generate_wordcloud(topic_words, topic_num, palette='inferno'):

colors = sns.color_palette(palette, n_colors=256).as_hex()

def color_func(word, font_size, position, orientation, random_state=None, **kwargs):

return colors[random_state.randint(0, len(colors) - 1)]

wordcloud = WordCloud(width=800, height=400, background_color='black', color_func=color_func).generate(' '.join(topic_words))

plt.figure(figsize=(10, 5))

plt.imshow(wordcloud, interpolation='bilinear')

plt.axis('off')

plt.title(f'Topic {topic_num} Word Cloud')

plt.show()

주요 실행

file_paths = [f"/home/roomal/Desktop/Dreyfus-Project/Dreyfus/{fname}" for fname in os.listdir("/home/roomal/Desktop/Dreyfus-Project/Dreyfus/") if fname.endswith(".pdf")]

authors, titles, all_sentences = process_files(file_paths)

output_file = "/home/roomal/Desktop/Dreyfus-Project/Dreyfus_Papers.csv"

save_data_to_csv(authors, titles, file_paths, output_file)

stopwords_filepath = "/home/roomal/Documents/Lists/stopwords.txt"

stopwords = load_stopwords(stopwords_filepath)

try:

topic_model = Top2Vec(

all_sentences,

embedding_model="distiluse-base-multilingual-cased",

speed="deep-learn",

workers=6

)

print("Top2Vec model created successfully.")

except ValueError as e:

print(f"Error initializing Top2Vec: {e}")

except Exception as e:

print(f"Unexpected error: {e}")

num_topics = topic_model.get_num_topics()

topic_words, word_scores, topic_nums = topic_model.get_topics(num_topics)

filtered_topic_words = filter_stopwords_from_topics(topic_words, stopwords)

for i, words in enumerate(filtered_topic_words):

print(f"Topic {i}: {', '.join(words)}")

keywords = ["heidegger"]

topic_words, word_scores, topic_scores, topic_nums = topic_model.search_topics(keywords=keywords, num_topics=num_topics)

filtered

_search_topic_words = filter_stopwords_from_topics(topic_words, stopwords)

for i, words in enumerate(filtered_search_topic_words):

generate_wordcloud(words, topic_nums[i])

for i in range(reduced_num_topics):

topic_words = topic_model.topic_words_reduced[i]

filtered_words = [word for word in topic_words if word.lower() not in stopwords]

print(f"Reduced Topic {i}: {', '.join(filtered_words)}")

generate_wordcloud(filtered_words, i)

주제 수를 줄이세요

reduced_num_topics = 5

topic_mapping = topic_model.hierarchical_topic_reduction(num_topics=reduced_num_topics)

# Print reduced topics and generate word clouds

for i in range(reduced_num_topics):

topic_words = topic_model.topic_words_reduced[i]

filtered_words = [word for word in topic_words if word.lower() not in stopwords]

print(f"Reduced Topic {i}: {', '.join(filtered_words)}")

generate_wordcloud(filtered_words, i)

릴리스 선언문

이 기사는 https://dev.to/roomals/topic-modeling-with-top2vec-dreyfus-ai-and-wordclouds-1ggl?1에서 복제됩니다.1 침해 내용이 있는 경우, [email protected]에 연락하여 삭제하시기 바랍니다. 그것

최신 튜토리얼

더>

-

PostgreSQL의 각 고유 식별자에 대한 마지막 행을 효율적으로 검색하는 방법은 무엇입니까?postgresql : 각각의 고유 식별자에 대한 마지막 행을 추출하는 select distinct on (id) id, date, another_info from the_table order by id, date desc; id ...프로그램 작성 2025-03-11에 게시되었습니다

PostgreSQL의 각 고유 식별자에 대한 마지막 행을 효율적으로 검색하는 방법은 무엇입니까?postgresql : 각각의 고유 식별자에 대한 마지막 행을 추출하는 select distinct on (id) id, date, another_info from the_table order by id, date desc; id ...프로그램 작성 2025-03-11에 게시되었습니다 -

순수한 CS로 여러 끈적 끈적한 요소를 서로 쌓을 수 있습니까?순수한 CSS에서 서로 위에 여러 개의 끈적 끈적 요소가 쌓일 수 있습니까? 원하는 동작을 볼 수 있습니다. 여기 : https://webthemez.com/demo/sticky-multi-header-scroll/index.html Java...프로그램 작성 2025-03-11에 게시되었습니다

순수한 CS로 여러 끈적 끈적한 요소를 서로 쌓을 수 있습니까?순수한 CSS에서 서로 위에 여러 개의 끈적 끈적 요소가 쌓일 수 있습니까? 원하는 동작을 볼 수 있습니다. 여기 : https://webthemez.com/demo/sticky-multi-header-scroll/index.html Java...프로그램 작성 2025-03-11에 게시되었습니다 -

JavaScript 객체에서 키를 동적으로 설정하는 방법은 무엇입니까?jsobj = 'example'1; jsObj['key' i] = 'example' 1; 배열은 특수한 유형의 객체입니다. 그것들은 숫자 특성 (인치) + 1의 수를 반영하는 길이 속성을 유지합니다. 이 특별한 동작은 표준 객체에...프로그램 작성 2025-03-11에 게시되었습니다

JavaScript 객체에서 키를 동적으로 설정하는 방법은 무엇입니까?jsobj = 'example'1; jsObj['key' i] = 'example' 1; 배열은 특수한 유형의 객체입니다. 그것들은 숫자 특성 (인치) + 1의 수를 반영하는 길이 속성을 유지합니다. 이 특별한 동작은 표준 객체에...프로그램 작성 2025-03-11에 게시되었습니다 -

익명의 JavaScript 이벤트 처리기를 깨끗하게 제거하는 방법은 무엇입니까?익명 이벤트 리스너를 제거하는 데 익명의 이벤트 리스너 추가 요소를 추가하면 유연성과 단순성을 제공하지만 유연성과 단순성을 제공하지만 제거 할 시간이되면 요소 자체를 교체하지 않고 도전 할 수 있습니다. 요소? element.addeventListene...프로그램 작성 2025-03-11에 게시되었습니다

익명의 JavaScript 이벤트 처리기를 깨끗하게 제거하는 방법은 무엇입니까?익명 이벤트 리스너를 제거하는 데 익명의 이벤트 리스너 추가 요소를 추가하면 유연성과 단순성을 제공하지만 유연성과 단순성을 제공하지만 제거 할 시간이되면 요소 자체를 교체하지 않고 도전 할 수 있습니다. 요소? element.addeventListene...프로그램 작성 2025-03-11에 게시되었습니다 -

전체 HTML 문서에서 특정 요소 유형의 첫 번째 인스턴스를 어떻게 스타일링하려면 어떻게해야합니까?javascript 솔루션 < /h2> : 최초의 유형 문서 전체를 달성합니다 유형의 첫 번째 요소와 일치하는 JavaScript 솔루션이 필요합니다. 문서에서 첫 번째 일치 요소를 선택하고 사용자 정의를 적용 할 수 있습니다. 그런 ...프로그램 작성 2025-03-11에 게시되었습니다

전체 HTML 문서에서 특정 요소 유형의 첫 번째 인스턴스를 어떻게 스타일링하려면 어떻게해야합니까?javascript 솔루션 < /h2> : 최초의 유형 문서 전체를 달성합니다 유형의 첫 번째 요소와 일치하는 JavaScript 솔루션이 필요합니다. 문서에서 첫 번째 일치 요소를 선택하고 사용자 정의를 적용 할 수 있습니다. 그런 ...프로그램 작성 2025-03-11에 게시되었습니다 -

\ "(1) 대 (;;) : 컴파일러 최적화는 성능 차이를 제거합니까? \"대답 : 대부분의 최신 컴파일러에는 (1)과 (;;). 컴파일러 : s-> 7 8 v-> 4를 풀립니다 -e syntax ok gcc : GCC에서 두 루프는 다음과 같이 동일한 어셈블리 코드로 컴파일합니다. . t_while : ...프로그램 작성 2025-03-11에 게시되었습니다

\ "(1) 대 (;;) : 컴파일러 최적화는 성능 차이를 제거합니까? \"대답 : 대부분의 최신 컴파일러에는 (1)과 (;;). 컴파일러 : s-> 7 8 v-> 4를 풀립니다 -e syntax ok gcc : GCC에서 두 루프는 다음과 같이 동일한 어셈블리 코드로 컴파일합니다. . t_while : ...프로그램 작성 2025-03-11에 게시되었습니다 -

Firefox Back 버튼을 사용할 때 JavaScript 실행이 중단되는 이유는 무엇입니까?원인 및 솔루션 : 이 동작은 브라우저 캐싱 자바 스크립트 리소스에 의해 발생합니다. 이 문제를 해결하고 후속 페이지 방문에서 스크립트가 실행되도록하기 위해 Firefox 사용자는 Window.onload 이벤트에서 호출되도록 빈 기능을 설정해야합니다. ...프로그램 작성 2025-03-11에 게시되었습니다

Firefox Back 버튼을 사용할 때 JavaScript 실행이 중단되는 이유는 무엇입니까?원인 및 솔루션 : 이 동작은 브라우저 캐싱 자바 스크립트 리소스에 의해 발생합니다. 이 문제를 해결하고 후속 페이지 방문에서 스크립트가 실행되도록하기 위해 Firefox 사용자는 Window.onload 이벤트에서 호출되도록 빈 기능을 설정해야합니다. ...프로그램 작성 2025-03-11에 게시되었습니다 -

PHP 배열 키-값 이상 : 07 및 08의 호기심 사례 이해이 문제는 PHP의 주요 제로 해석에서 비롯됩니다. 숫자가 0 (예 : 07 또는 08)으로 접두사를 넣으면 PHP는 소수점 값이 아닌 옥탈 값 (기본 8)으로 해석합니다. 설명 : echo 07; // 인쇄 7 (10 월 07 = 10 진수 7) ...프로그램 작성 2025-03-11에 게시되었습니다

PHP 배열 키-값 이상 : 07 및 08의 호기심 사례 이해이 문제는 PHP의 주요 제로 해석에서 비롯됩니다. 숫자가 0 (예 : 07 또는 08)으로 접두사를 넣으면 PHP는 소수점 값이 아닌 옥탈 값 (기본 8)으로 해석합니다. 설명 : echo 07; // 인쇄 7 (10 월 07 = 10 진수 7) ...프로그램 작성 2025-03-11에 게시되었습니다 -

FormData ()로 여러 파일 업로드를 처리하려면 어떻게해야합니까?); 그러나이 코드는 첫 번째 선택된 파일 만 처리합니다. 파일 : var files = document.getElementById ( 'filetOUpload'). 파일; for (var x = 0; x프로그램 작성 2025-03-11에 게시되었습니다

FormData ()로 여러 파일 업로드를 처리하려면 어떻게해야합니까?); 그러나이 코드는 첫 번째 선택된 파일 만 처리합니다. 파일 : var files = document.getElementById ( 'filetOUpload'). 파일; for (var x = 0; x프로그램 작성 2025-03-11에 게시되었습니다 -

MySQL 오류 #1089 : 잘못된 접두사 키를 얻는 이유는 무엇입니까?오류 설명 [#1089- 잘못된 접두사 키 "는 테이블에서 열에 프리픽스 키를 만들려고 시도 할 때 나타날 수 있습니다. 접두사 키는 특정 접두사 길이의 문자열 열 길이를 색인화하도록 설계되었으며, 접두사를 더 빠르게 검색 할 수 있습니...프로그램 작성 2025-03-11에 게시되었습니다

MySQL 오류 #1089 : 잘못된 접두사 키를 얻는 이유는 무엇입니까?오류 설명 [#1089- 잘못된 접두사 키 "는 테이블에서 열에 프리픽스 키를 만들려고 시도 할 때 나타날 수 있습니다. 접두사 키는 특정 접두사 길이의 문자열 열 길이를 색인화하도록 설계되었으며, 접두사를 더 빠르게 검색 할 수 있습니...프로그램 작성 2025-03-11에 게시되었습니다 -

PHP를 사용하여 Blob (이미지)을 MySQL에 올바르게 삽입하는 방법은 무엇입니까?문제 $ sql = "삽입 ImagesTore (imageId, image) 값 ( '$ this- & gt; image_id', 'file_get_contents ($ tmp_image)'; 결과적으로 실제 이...프로그램 작성 2025-03-11에 게시되었습니다

PHP를 사용하여 Blob (이미지)을 MySQL에 올바르게 삽입하는 방법은 무엇입니까?문제 $ sql = "삽입 ImagesTore (imageId, image) 값 ( '$ this- & gt; image_id', 'file_get_contents ($ tmp_image)'; 결과적으로 실제 이...프로그램 작성 2025-03-11에 게시되었습니다 -

동적 인 크기의 부모 요소 내에서 요소의 스크롤 범위를 제한하는 방법은 무엇입니까?문제 : 고정 된 사이드 바로 조정을 유지하면서 사용자의 수직 스크롤과 함께 이동하는 스크롤 가능한 맵 디브가있는 레이아웃을 고려합니다. 그러나 맵의 스크롤은 뷰포트의 높이를 초과하여 사용자가 페이지 바닥 글에 액세스하는 것을 방지합니다. ...프로그램 작성 2025-03-11에 게시되었습니다

동적 인 크기의 부모 요소 내에서 요소의 스크롤 범위를 제한하는 방법은 무엇입니까?문제 : 고정 된 사이드 바로 조정을 유지하면서 사용자의 수직 스크롤과 함께 이동하는 스크롤 가능한 맵 디브가있는 레이아웃을 고려합니다. 그러나 맵의 스크롤은 뷰포트의 높이를 초과하여 사용자가 페이지 바닥 글에 액세스하는 것을 방지합니다. ...프로그램 작성 2025-03-11에 게시되었습니다 -

McRypt에서 OpenSSL로 암호화를 마이그레이션하고 OpenSSL을 사용하여 McRypt 암호화 데이터를 해제 할 수 있습니까?질문 : McRypt에서 OpenSSL로 내 암호화 라이브러리를 업그레이드 할 수 있습니까? 그렇다면 어떻게? 대답 : 대답 : 예, McRypt에서 암호화 라이브러리를 OpenSSL로 업그레이드 할 수 있습니다. OpenSSL을 사용하여 McRyp...프로그램 작성 2025-03-10에 게시되었습니다

McRypt에서 OpenSSL로 암호화를 마이그레이션하고 OpenSSL을 사용하여 McRypt 암호화 데이터를 해제 할 수 있습니까?질문 : McRypt에서 OpenSSL로 내 암호화 라이브러리를 업그레이드 할 수 있습니까? 그렇다면 어떻게? 대답 : 대답 : 예, McRypt에서 암호화 라이브러리를 OpenSSL로 업그레이드 할 수 있습니다. OpenSSL을 사용하여 McRyp...프로그램 작성 2025-03-10에 게시되었습니다 -

Homebrew에서 GO를 설정하면 명령 줄 실행 문제가 발생하는 이유는 무엇입니까?발생하는 문제를 해결하려면 다음을 수행하십시오. 1. 필요한 디렉토리 만들기 mkdir $ home/go mkdir -p $ home/go/src/github.com/user 2. 환경 변수 구성프로그램 작성 2025-03-10에 게시되었습니다

Homebrew에서 GO를 설정하면 명령 줄 실행 문제가 발생하는 이유는 무엇입니까?발생하는 문제를 해결하려면 다음을 수행하십시오. 1. 필요한 디렉토리 만들기 mkdir $ home/go mkdir -p $ home/go/src/github.com/user 2. 환경 변수 구성프로그램 작성 2025-03-10에 게시되었습니다 -

Java는 여러 반환 유형을 허용합니까 : 일반적인 방법을 자세히 살펴보십시오.public 목록 getResult (문자열 s); 여기서 foo는 사용자 정의 클래스입니다. 이 방법 선언은 두 가지 반환 유형을 자랑하는 것처럼 보입니다. 목록과 E. 그러나 이것이 사실인가? 일반 방법 : 미스터리 메소드는 단일...프로그램 작성 2025-03-10에 게시되었습니다

Java는 여러 반환 유형을 허용합니까 : 일반적인 방법을 자세히 살펴보십시오.public 목록 getResult (문자열 s); 여기서 foo는 사용자 정의 클래스입니다. 이 방법 선언은 두 가지 반환 유형을 자랑하는 것처럼 보입니다. 목록과 E. 그러나 이것이 사실인가? 일반 방법 : 미스터리 메소드는 단일...프로그램 작성 2025-03-10에 게시되었습니다

중국어 공부

- 1 "걷다"를 중국어로 어떻게 말하나요? 走路 중국어 발음, 走路 중국어 학습

- 2 "비행기를 타다"를 중국어로 어떻게 말하나요? 坐飞机 중국어 발음, 坐飞机 중국어 학습

- 3 "기차를 타다"를 중국어로 어떻게 말하나요? 坐火车 중국어 발음, 坐火车 중국어 학습

- 4 "버스를 타다"를 중국어로 어떻게 말하나요? 坐车 중국어 발음, 坐车 중국어 학습

- 5 운전을 중국어로 어떻게 말하나요? 开车 중국어 발음, 开车 중국어 학습

- 6 수영을 중국어로 뭐라고 하나요? 游泳 중국어 발음, 游泳 중국어 학습

- 7 자전거를 타다 중국어로 뭐라고 하나요? 骑自行车 중국어 발음, 骑自行车 중국어 학습

- 8 중국어로 안녕하세요를 어떻게 말해요? 你好중국어 발음, 你好중국어 학습

- 9 감사합니다를 중국어로 어떻게 말하나요? 谢谢중국어 발음, 谢谢중국어 학습

- 10 How to say goodbye in Chinese? 再见Chinese pronunciation, 再见Chinese learning