How to Build a Caching Layer for Your Laravel API

Let's say you are building an API to serve some data and you discover that GET responses are quite slow. You have tried optimizing your queries and indexing your database tables on frequently queried columns, but you are still not getting the response times you want. The next step is to write a caching layer for your API. 'Caching layer' here is just a fancy term for a middleware that stores successful responses in a fast-to-retrieve store such as Redis or Memcached. Any further request to the API first checks whether the data is available in the store and, if so, serves the response from there.

Prerequisites

- Laravel

- Redis

Before we start

I am assuming that if you have gotten this far, you know how to create a Laravel app. You should also have either a local or a cloud Redis instance to connect to. If you have Docker locally, you can copy my compose file here. Also, for a guide on how to connect to the Redis cache driver, read here.

Creating our Dummy Data

To check that our caching layer works as expected, we need some data. Let's say we have a model named Post. I will create some posts and add some complex filtering that could be database-intensive, which we can then optimize by caching.

Now let's start writing our middleware:

We create our middleware skeleton by running

php artisan make:middleware CacheLayer

Then register it in your app/Http/Kernel.php under the api middleware group like so:

protected $middlewareGroups = [

'api' => [

CacheLayer::class,

],

];

If you are running Laravel 11, register it in your bootstrap/app.php instead:

->withMiddleware(function (Middleware $middleware) {

$middleware->api(append: [

\App\Http\Middleware\CacheLayer::class,

]);

})

Caching Terminologies

- Cache Hit: occurs when data requested is found in the cache.

- Cache Miss: happens when the requested data is not found in the cache.

- Cache Flush: clearing out the stored data in the cache so that it can be repopulated with fresh data.

- Cache tags: a feature used to group related items in the cache, making it easier to manage and invalidate related data together. In Laravel, tags are not available on every cache driver: stores such as Redis and Memcached support them, while the file and database drivers do not.

- Time to Live (TTL): this refers to the amount of time a cached object stays valid before it expires. One common misunderstanding is thinking that every time an object is accessed from the cache (a cache hit), its expiry time gets reset. However, this isn't true. For instance, if the TTL is set to 5 minutes, the cached object will expire after 5 minutes, no matter how many times it's accessed within that period. After the 5 minutes are up, the next request for that object will result in a new entry being created in the cache.
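To make the TTL point concrete, here is a minimal, framework-free sketch. FixedTtlCache is a hypothetical in-memory class (it is not part of Laravel), and the current time is passed in explicitly as $now so the behaviour is easy to follow.

```php
<?php

// Hypothetical in-memory cache, used only to illustrate a fixed (non-sliding) TTL.
final class FixedTtlCache
{
    /** @var array<string, array{value: mixed, expiresAt: int}> */
    private array $items = [];

    public function put(string $key, mixed $value, int $ttlSeconds, int $now): void
    {
        $this->items[$key] = ['value' => $value, 'expiresAt' => $now + $ttlSeconds];
    }

    // Returns the cached value on a hit, or null on a miss or after expiry.
    // Reading never touches expiresAt, so hits do not extend the entry's life.
    public function get(string $key, int $now): mixed
    {
        if (!isset($this->items[$key])) {
            return null; // cache miss
        }

        if ($now >= $this->items[$key]['expiresAt']) {
            unset($this->items[$key]); // expired: treated as a miss
            return null;
        }

        return $this->items[$key]['value']; // cache hit
    }
}

$cache = new FixedTtlCache();
$cache->put('posts', '[{"id":1}]', 300, 0); // stored at t=0 with a 5-minute TTL

$hitAt4Min  = $cache->get('posts', 240); // still valid at t=240s
$missAt5Min = $cache->get('posts', 300); // expired, despite the earlier hit
```

The entry read at four minutes is gone at five minutes, which is the behaviour described above: access does not reset the clock.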

Computing a Unique Cache Key

Cache drivers are key-value stores: you have a key, and the value is your JSON. You need a unique cache key to identify each resource. A unique key also helps with cache invalidation, i.e. removing cache items when a resource is created or updated. My approach to cache key generation is to combine the request URL, query params, and body into one array, sort it, and then serialize it to a string. Add this to your cache middleware:

class CacheLayer

{

public function handle(Request $request, Closure $next): Response

{

}

private function getCacheKey(Request $request): string

{

// Fall back to the authenticated user's id (null for guests) when the route has no parameters.

$routeParameters = ! empty($request->route()->parameters) ? $request->route()->parameters : [auth()->id()];

$allParameters = array_merge($request->all(), $routeParameters);

$this->recursiveSort($allParameters);

return $request->url() . json_encode($allParameters);

}

private function recursiveSort(&$array): void

{

foreach ($array as &$value) {

if (is_array($value)) {

$this->recursiveSort($value);

}

}

ksort($array);

}

}

Let's go through the code step by step.

- First we check for the matched route parameters. We don't want to compute the same cache key for /users/1/posts and /users/2/posts.

- If there are no matched parameters, we pass in the user's id. This part is optional. If you have routes like /user that return details for the currently authenticated user, it makes sense to include the user id in the cache key. If not, you can just use an empty array ([]).

- Then we get all the query parameters and merge them with the route parameters.

- Then we sort the parameters. This sorting step is very important because it lets us return the same data for, say, /posts?page=1&limit=20 and /posts?limit=20&page=1. Regardless of the order of the parameters, we still compute the same cache key.
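The effect of the sorting step can be checked outside Laravel. The sketch below is a standalone approximation: buildCacheKey is a hypothetical helper that takes a plain URL string and an already-parsed parameter array instead of a Request object, and the example.test URL is made up.

```php
<?php

// Sorts an array by key at every nesting level (same idea as recursiveSort above).
function recursiveSort(array &$array): void
{
    foreach ($array as &$value) {
        if (is_array($value)) {
            recursiveSort($value);
        }
    }
    unset($value); // break the reference left over from the loop

    ksort($array);
}

// Hypothetical helper: builds a key from a URL and a parameter array.
function buildCacheKey(string $url, array $parameters): string
{
    recursiveSort($parameters);

    return $url . json_encode($parameters);
}

// Two query strings with the same parameters in a different order.
parse_str('page=1&limit=20&filter[media]=1&filter[links]=1', $first);
parse_str('filter[links]=1&limit=20&filter[media]=1&page=1', $second);

$keyA = buildCacheKey('https://example.test/posts', $first);
$keyB = buildCacheKey('https://example.test/posts', $second);

// A different page must produce a different key.
$keyC = buildCacheKey('https://example.test/posts', ['page' => '2', 'limit' => '20']);
```

Here $keyA and $keyB are identical even though the nested filter keys were also supplied in a different order, while $keyC differs because the page value changed.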

Excluding routes

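Depending on the nature of the application you are building, there will be some GET routes that you don't want to cache. For these we create a constant holding regular expressions that match those routes. This will look like:

private const EXCLUDED_URLS = [

'~^/api/v1/posts/[0-9a-zA-Z]+/comments(\?.*)?$~i',

];

In this case, the regex matches the comments route of any post, for example /api/v1/posts/1/comments, with or without a query string. The pattern starts with a slash because getRequestUri(), which the middleware matches against, returns the path with a leading slash.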
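A quick way to sanity-check an exclusion pattern is to run it against a few sample URIs. This sketch assumes the pattern is written with the + quantifier after the character class and a leading slash, and that it is matched against a path-plus-query string of the kind getRequestUri() returns. isExcluded is a hypothetical helper, not part of the middleware.

```php
<?php

// Exclusion patterns, written against a URI that starts with a slash.
const EXCLUDED_URLS = [
    '~^/api/v1/posts/[0-9a-zA-Z]+/comments(\?.*)?$~i',
];

// Hypothetical helper: true when the URI matches any excluded pattern.
function isExcluded(string $requestUri): bool
{
    foreach (EXCLUDED_URLS as $pattern) {
        if (preg_match($pattern, $requestUri) === 1) {
            return true;
        }
    }

    return false;
}

$commentsExcluded = isExcluded('/api/v1/posts/42/comments?page=2');
$postExcluded     = isExcluded('/api/v1/posts/42');
$noLeadingSlash   = isExcluded('api/v1/posts/42/comments');
```

The comments route is excluded, the post route is not, and a URI without the leading slash does not match, which is why the anchor at the start of the pattern matters.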

Configuring TTL

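For this, add this entry to your config/cache.php. The value is the TTL in seconds; keeping it a plain number rather than a date object means it stays correct when the configuration is cached:

'ttl' => 60 * 5, // 5 minutes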

Writing our Middleware

Now that all our preliminary steps are in place, we can write our middleware code:

public function handle(Request $request, Closure $next): Response

{

$method = $request->getMethod();

if ('GET' !== $method) {

return $next($request);

}

foreach (self::EXCLUDED_URLS as $pattern) {

if (preg_match($pattern, $request->getRequestUri())) {

return $next($request);

}

}

$cacheKey = $this->getCacheKey($request);

$exception = null;

$response = cache()

->tags([$request->url()])

->remember(

key: $cacheKey,

ttl: config('cache.ttl'),

callback: function () use ($next, $request, &$exception) {

$res = $next($request);

if (property_exists($res, 'exception') && null !== $res->exception) {

$exception = $res;

return null;

}

return $res;

}

);

return $exception ?? $response;

}

- First we skip caching for non-GET requests and excluded URLs.

- Then we use the cache helper and tag the cache entry with the request URL.

- We use the remember method to store the cache entry. Inside its callback we call the other handlers down the stack with $next($request) and check the response for an exception. Finally, we return either the response that carried the exception or the cached response.

Cache Invalidation

When resources are created or updated, we have to clear the cache so users can see the new data. To do this we will tweak our middleware code a bit. In the part where we check the request method, we add this:

if ('GET' !== $method) {

$response = $next($request);

if ($response->isSuccessful()) {

$tag = $request->url();

if ('PATCH' === $method || 'DELETE' === $method) {

$tag = mb_substr($tag, 0, mb_strrpos($tag, '/'));

}

cache()->tags([$tag])->flush();

}

return $response;

}

This code flushes the cache for successful non-GET requests. For PATCH and DELETE requests we strip the trailing {id}. For example, if the request is PATCH /users/1/posts/2, we strip the last id, leaving /users/1/posts. This way, when we update a post, we clear the cache of all of that user's posts, so the user can see fresh data.
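The tag computation can be tried on its own. In this sketch, tagToFlush is a hypothetical helper that mirrors the branch above, and the example.test URLs are made up. It uses the byte-oriented strrpos and substr so it runs without the mbstring extension; for plain ASCII URLs like these the result should match the mb_ variants used in the middleware.

```php
<?php

// Hypothetical helper mirroring the tag logic in the middleware.
function tagToFlush(string $method, string $url): string
{
    if ($method === 'PATCH' || $method === 'DELETE') {
        // Drop the last path segment (the resource id) to get the collection URL.
        $lastSlash = strrpos($url, '/');

        return $lastSlash === false ? $url : substr($url, 0, $lastSlash);
    }

    // POST and other methods flush the tag for the URL they were sent to.
    return $url;
}

$patchTag  = tagToFlush('PATCH', 'https://example.test/users/1/posts/2');
$deleteTag = tagToFlush('DELETE', 'https://example.test/users/1/posts/2');
$postTag   = tagToFlush('POST', 'https://example.test/users/1/posts');
```

All three calls resolve to the collection URL https://example.test/users/1/posts. Two things are worth checking in your own API: PUT is not part of the condition, so it would flush only the tag for the exact URL it targets, and a cached GET for a single resource is tagged with its own URL rather than the collection URL.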

With this, we are done with the CacheLayer implementation. Let's test it.

Testing our Cache

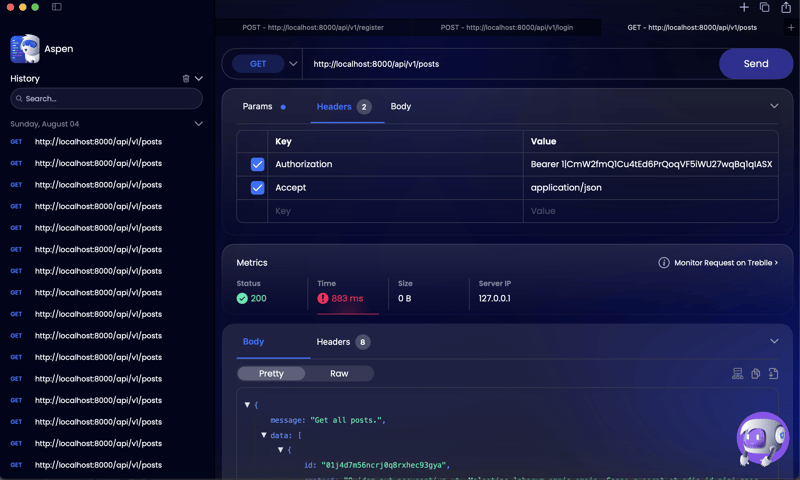

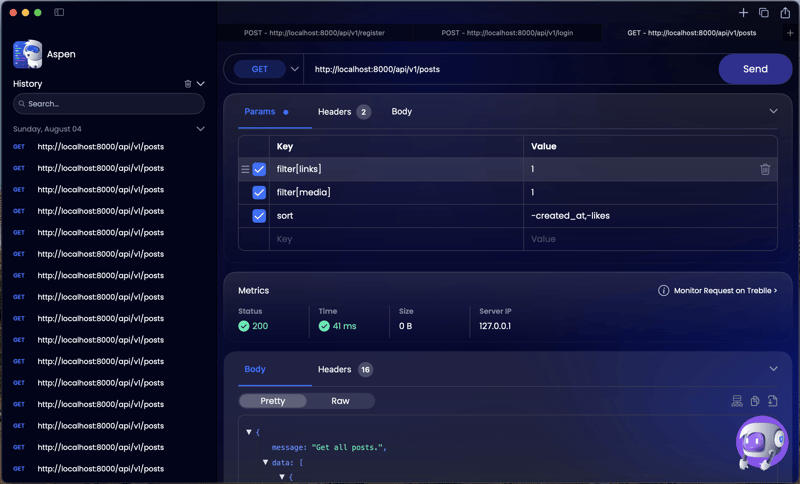

Let's say we want to retrieve all of a user's posts that have links and media, sorted by likes and by most recently created. According to the JSON:API spec, the URL for that kind of request looks like /posts?filter[links]=1&filter[media]=1&sort=-created_at,-likes. On a posts table of 1.2 million records, the response time is ~800ms.

After adding our cache middleware, we get a response time of 41ms.

Great success!

Optimizations

Another optional step is to compress the JSON payload we store in Redis. JSON is not the most memory-efficient format, so we can use zlib compression to compress the JSON before storing it and decompress it before sending it to the client.

The code for that will look like:

$response = cache()

->tags([$request->url()])

->remember(

key: $cacheKey,

ttl: config('cache.ttl'),

callback: function () use ($next, $request, &$exception) {

$res = $next($request);

if (property_exists($res, 'exception') && null !== $res->exception) {

$exception = $res;

return null;

}

return gzcompress($res->getContent());

}

);

return $exception ?? response(gzuncompress($response));
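To get a feel for what compression buys you, the round trip can be measured in isolation. This is a rough sketch with made-up post data; actual savings depend on how repetitive your real payloads are.

```php
<?php

// Build a repetitive JSON payload similar to a list of API resources (made-up data).
$posts = [];
for ($i = 1; $i <= 200; $i++) {
    $posts[] = ['id' => $i, 'title' => "Post {$i}", 'likes' => $i * 3, 'has_media' => true];
}
$json = json_encode(['data' => $posts]);

$compressed = gzcompress($json);         // zlib-compressed binary string
$restored   = gzuncompress($compressed); // the original JSON again

$originalBytes   = strlen($json);
$compressedBytes = strlen($compressed);

printf("original: %d bytes, compressed: %d bytes\n", $originalBytes, $compressedBytes);
```

The restored string is byte-for-byte identical to the input. Note that response() called with a plain string does not set a JSON content type on its own, so you may want to pass the header explicitly when rebuilding the response.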

The full code for the middleware, including compression, looks like:

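<?php

namespace App\Http\Middleware;

use Closure;

use Illuminate\Http\Request;

use Symfony\Component\HttpFoundation\Response;

class CacheLayer

{

private const EXCLUDED_URLS = [

'~^/api/v1/posts/[0-9a-zA-Z]+/comments(\?.*)?$~i',

];

public function handle(Request $request, Closure $next): Response

{

$method = $request->getMethod();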

if ('GET' !== $method) {

$response = $next($request);

if ($response->isSuccessful()) {

$tag = $request->url();

if ('PATCH' === $method || 'DELETE' === $method) {

$tag = mb_substr($tag, 0, mb_strrpos($tag, '/'));

}

cache()->tags([$tag])->flush();

}

return $response;

}

foreach (self::EXCLUDED_URLS as $pattern) {

if (preg_match($pattern, $request->getRequestUri())) {

return $next($request);

}

}

$cacheKey = $this->getCacheKey($request);

$exception = null;

$response = cache()

->tags([$request->url()])

->remember(

key: $cacheKey,

ttl: config('cache.ttl'),

callback: function () use ($next, $request, &$exception) {

$res = $next($request);

if (property_exists($res, 'exception') && null !== $res->exception) {

$exception = $res;

return null;

}

return gzcompress($res->getContent());

}

);

return $exception ?? response(gzuncompress($response));

}

private function getCacheKey(Request $request): string

{

// Fall back to the authenticated user's id (null for guests) when the route has no parameters.

$routeParameters = ! empty($request->route()->parameters) ? $request->route()->parameters : [auth()->id()];

$allParameters = array_merge($request->all(), $routeParameters);

$this->recursiveSort($allParameters);

return $request->url() . json_encode($allParameters);

}

private function recursiveSort(&$array): void

{

foreach ($array as &$value) {

if (is_array($value)) {

$this->recursiveSort($value);

}

}

ksort($array);

}

}

Summary

This is all I have for you today on caching. Happy building, and drop any questions, comments, and improvements in the comments!
