A Deep Dive into CNCF’s Cloud-Native AI Whitepaper

During KubeCon EU 2024, CNCF launched its first Cloud-Native AI Whitepaper. This article provides an in-depth analysis of the content of this whitepaper.

In March 2024, during KubeCon EU, the Cloud Native Computing Foundation (CNCF) released its first detailed whitepaper on Cloud-Native Artificial Intelligence (CNAI) [1]. The report explores the current state, challenges, and future directions of integrating cloud-native technologies with artificial intelligence. This article walks through the core content of the whitepaper.

This article was first published on Medium under the MPP plan. If you are a Medium user, please follow me on Medium. Thank you very much.

What is Cloud-Native AI?

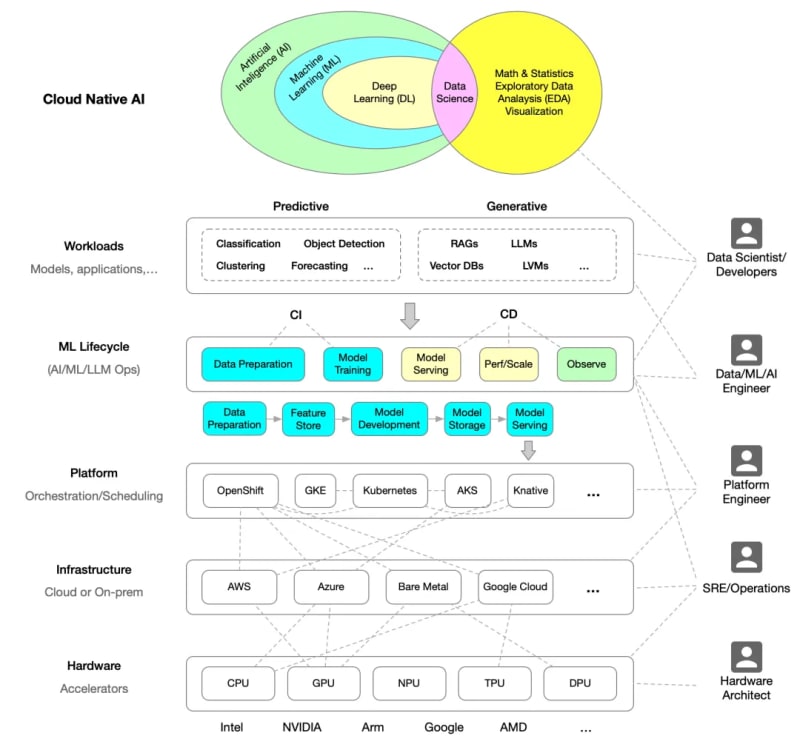

Cloud-Native AI refers to building and deploying artificial intelligence applications and workloads using cloud-native technology principles. This includes leveraging microservices, containerization, declarative APIs, and continuous integration/continuous deployment (CI/CD) among other cloud-native technologies to enhance AI applications’ scalability, reusability, and operability.
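To make the "declarative API" idea concrete, here is a minimal sketch in Python that describes a containerized training run as a Kubernetes Job manifest. The job name, image, and command are hypothetical placeholders and do not come from the whitepaper; the `nvidia.com/gpu` resource key assumes the NVIDIA device plugin is installed in the cluster.

```python
import json


def training_job_manifest(name: str, image: str, gpus: int = 1) -> dict:
    """Build a declarative Kubernetes Job manifest for one training run.

    The manifest only states the desired end state (one container, N GPUs);
    the cluster's controllers decide how to reach it.
    """
    return {
        "apiVersion": "batch/v1",
        "kind": "Job",
        "metadata": {"name": name},
        "spec": {
            "backoffLimit": 2,  # retry a failed pod at most twice
            "template": {
                "spec": {
                    "restartPolicy": "Never",
                    "containers": [
                        {
                            "name": "trainer",
                            "image": image,  # hypothetical image name
                            "command": ["python", "train.py"],  # hypothetical entry point
                            "resources": {
                                # Assumes the NVIDIA device plugin exposes this resource.
                                "limits": {"nvidia.com/gpu": gpus}
                            },
                        }
                    ],
                }
            },
        },
    }


if __name__ == "__main__":
    manifest = training_job_manifest(
        "demo-train", "registry.example.com/trainer:latest"
    )
    # kubectl accepts JSON as well as YAML, e.g. `kubectl apply -f job.json`.
    print(json.dumps(manifest, indent=2))
```

The point of the sketch is that the training run is plain data: it can be version-controlled, reviewed, and re-applied, which is where the reusability and operability benefits mentioned above come from.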

The following diagram illustrates the architecture of Cloud-Native AI, redrawn based on the whitepaper.

Relationship between Cloud-Native AI and Cloud-Native Technologies

Cloud-native technologies provide a flexible, scalable platform that makes the development and operation of AI applications more efficient. Through containerization and a microservices architecture, developers can iterate on and deploy AI models quickly while keeping the system highly available and scalable. Kubernetes supports AI applications with capabilities such as resource scheduling, automatic scaling, and service discovery.
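As an illustration of automatic scaling, the sketch below reproduces the replica calculation documented for the Kubernetes Horizontal Pod Autoscaler: desired = ceil(current * currentMetric / targetMetric). The function name, the replica bounds, and the example numbers are illustrative assumptions. The real controller applies further rules (stabilization windows, per-autoscaler scaling policies) that are not modeled here.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, min_replicas: int = 1,
                     max_replicas: int = 10, tolerance: float = 0.1) -> int:
    """Return the replica count an HPA-style controller would aim for."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    ratio = current_metric / target_metric
    # Within the tolerance band, keep the current replica count to avoid flapping.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Clamp to the configured bounds (illustrative defaults).
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # 4 inference pods at 90% average utilization against a 60% target.
    print(desired_replicas(4, 90.0, 60.0))  # 6
```

For a model-serving deployment, the metric could be CPU utilization or a custom signal such as requests per second; the calculation stays the same.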

The whitepaper provides two examples to illustrate the relationship between Cloud-Native AI and cloud-native technologies, namely running AI on cloud-native infrastructure:

- Hugging Face Collaborates with Microsoft to launch Hugging Face Model Catalog on Azure [2]

- OpenAI Scaling Kubernetes to 7,500 nodes [3]

Challenges of Cloud-Native AI

Although cloud-native platforms provide a solid foundation for AI applications, integrating AI workloads with them still poses challenges. These include the complexity of data preparation, the resource requirements of model training, and maintaining model security and isolation in multi-tenant environments. In addition, resource management and scheduling in cloud-native environments are crucial for large-scale AI applications and need further optimization to support efficient model training and inference.

Development Path of Cloud-Native AI

The whitepaper proposes several development paths for Cloud-Native AI, including improving resource scheduling algorithms to better support AI workloads, developing new service mesh technologies to enhance the performance and security of AI applications, and promoting innovation and standardization of Cloud-Native AI technology through open-source projects and community collaboration.

Cloud-Native AI Technology Landscape

Cloud-Native AI involves various technologies, ranging from containers and microservices to service mesh and serverless computing. Kubernetes plays a central role in deploying and managing AI applications, while service mesh technologies such as Istio and Envoy provide robust traffic management and security features. Additionally, monitoring tools like Prometheus and Grafana are crucial for maintaining the performance and reliability of AI applications.

Below is the Cloud-Native AI landscape provided in the whitepaper, listed by category.

General Orchestration

- Kubernetes

- Volcano

- Armada

- KubeRay

- NVIDIA NeMo

- YuniKorn

- Kueue

- Flame

Distributed Training

- Kubeflow Training Operator

- PyTorch DDP

- TensorFlow Distributed

- Open MPI

- DeepSpeed

- Megatron

- Horovod

- Apla

- …

ML Serving

- KServe

- Seldon

- vLLM

- TGT

- SkyPilot

- …

CI/CD — Delivery

- Kubeflow Pipelines

- MLflow

- TFX

- BentoML

- MLRun

- …

Data Science

- Jupyter

- Kubeflow Notebooks

- PyTorch

- TensorFlow

- Apache Zeppelin

Workload Observability

- Prometheus

- InfluxDB

- Grafana

- Weights and Biases (wandb)

- OpenTelemetry

- …

AutoML

- Hyperopt

- Optuna

- Kubeflow Katib

- NNI

- …

Governance & Policy

- Kyverno

- Kyverno-JSON

- OPA/Gatekeeper

- StackRox Minder

- …

Data Architecture

- ClickHouse

- Apache Pinot

- Apache Druid

- Cassandra

- ScyllaDB

- Hadoop HDFS

- Apache HBase

- Presto

- Trino

- Apache Spark

- Apache Flink

- Kafka

- Pulsar

- Fluid

- Memcached

- Redis

- Alluxio

- Apache Superset

- …

Vector Databases

- Chroma

- Weaviate

- Qdrant

- Pinecone

- Extensions

  - Redis

  - PostgreSQL

  - Elasticsearch

- …

Model/LLM Observability

- TruLens

- Langfuse

- Deepchecks

- OpenLLMetry

- …

Conclusion

In summary, the key points are:

- Role of the Open-Source Community: The whitepaper highlights the role of the open-source community in advancing Cloud-Native AI, including accelerating innovation and reducing costs through open-source projects and broad collaboration.

- Importance of Cloud-Native Technologies: Cloud-Native AI is built on cloud-native principles and emphasizes repeatability and scalability. Cloud-native technologies provide an efficient development and operation environment for AI applications, especially in resource scheduling and service scalability.

- Existing Challenges: Despite its many advantages, Cloud-Native AI still faces challenges in data preparation, model training resource requirements, and model security and isolation.

- Future Development Directions: The whitepaper proposes development paths that include optimizing resource scheduling algorithms to support AI workloads, developing new service mesh technologies to enhance performance and security, and promoting technology innovation and standardization through open-source projects and community collaboration.

- Key Technological Components: Key technologies in Cloud-Native AI include containers, microservices, service mesh, and serverless computing, among others. Kubernetes plays a central role in deploying and managing AI applications, while service mesh technologies like Istio and Envoy provide the necessary traffic management and security.

For more details, please download the Cloud-Native AI whitepaper [4].

Reference Links

[1] Whitepaper

[2] Hugging Face Collaborates with Microsoft to launch Hugging Face Model Catalog on Azure

[3] OpenAI Scaling Kubernetes to 7,500 nodes

[4] Cloud-Native AI Whitepaper
